French pronunciation assessment with Azure and my Emacs setup

I'm working on learning French because it's fun and because I want to help my daughter with her French classes. Some sounds are particularly difficult because they don't exist in English, so they involve moving my tongue and my lips in ways that I'm not used to. I've been working on some sentences my tutor assigned me to help me with various sounds. I practice:

- during the 45-minute virtual sessions with my tutor twice a week: I record this with OBS

- recording on my phone when I find myself with some spare time away from my computer, like when I'm waiting for my daughter

- recording on my computer with Emacs, subed-record, and ffmpeg

- just out loud, like when I'm skating or doing the dishes

I've been working on my processes for reviewing reference examples either from text-to-speech engines or my meeting audio, recording and reviewing my attempts, and comparing them.

I want to be able to quickly listen to my best attempts from a session, compare different versions, and keep track of my progress over time. I also want to figure out how to practice pronunciation in between sessions with my tutor while minimizing the risk of reinforcing mistakes. To segment the audio for review, I use WhisperX to get the transcript and word timing data with speaker identification (diarization). I use subed-record in Emacs to correct the transcript, cut/split/trim audio segments, and compile them. I want to make this process even easier.

Some of the sounds I'm working on are:

- /y/ as in mule and bu

- /ʁ/ as in trottoir: making it with less air, but without it feeling like an "h" instead; also, transitioning to /y/ as in brume

- /œ/ as in cœur

- distinguishing between roue and rue

Microsoft Azure pronunciation assessment

I've been thinking about how to practise more effectively in between my twice-weekly tutoring sessions. There's been a fair amount of research into computer-aided language training and pronunciation assessment, and I wonder how I can tweak my processes and interfaces to take advantage of what other people have learned. I think many apps use the confidence scores of Whisper-based speech recognition engines. Other services try to be more detailed. For example, Microsoft Azure offers a pronunciation assessment service that scores audio samples on:

- accuracy: how closely phonemes match a native speaker's pronunciation

- fluency: how closely the silent breaks between words match a native speaker's

- completeness: whether all the words were said

- pronunciation: the overall score, aggregated from the other scores

- confidence

Azure's syllable and phoneme analyses only work for the en-US locale, so I can't use them for fr-FR. That's okay; LLM pronunciation evaluation might work better for sentences than for words or phonemes anyway. [1]

I'll share my results first, and then I'll describe my workflow.

For example, here are some Azure scores for "Trois très grands trains traversent trois trop grandes rues". The columns compare my March 3 attempt, an intentionally bad attempt, a Kokoro TTS sample, and an audio segment of my tutor speaking (not included).

| | Mar 3 | Bad | Kokoro | Tutor |
| Overall | 94 | 44 | 97 | 93 |
| Accuracy | 93 | 63 | 96 | 100 |
| Fluency | 93 | 62 | 100 | 89 |
| Completeness | 100 | 33 | 100 | 100 |
| Confidence | 90 | 80 | 92 | 02 |

I'm not entirely sure how useful these numbers will be. They do distinguish between my current attempts and intentionally bad pronunciation, but I'm not sure how much I can trust them yet, or how useful they will be for guiding my attempts in between tutoring sessions. I can imagine a workflow where I listen to a reference, record my attempt, and replay the reference and the recording while displaying the scores, a waveform, and a spectrogram.

Let's look at another example of the scores. The tongue-twister "La mule sûre court plus vite que le loup fou." might offer a more useful comparison because I have a hard time with the u sound in "mule".

| | Mar 3 | with tutor | Kokoro | Tutor |
| Overall | 84 | 94 | 100 | 95 |
| Accuracy | 96 | 95 | 100 | 100 |
| Fluency | 75 | 92 | 100 | 93 |
| Completeness | 100 | 100 | 100 | 100 |
| Confidence | 84 | 85 | 89 | 87 |

Phonemes

What about trying to get the IPA and then doing some kind of comparison? I tried using Allosaurus and Montreal Forced Aligner for my audio samples for "La mule sûre court plus vite que le loup fou." I've also included IPA output from Kokoro TTS and espeak, which generate them from text, although I've removed the stress marks for easier comparison.

| Mar 3 | naɛmis̪yʁkɔpluzitɑləkœnədufu |
| with tutor (Allosaurus) | lɛns̪ikɑlovitkɛlədufu |
| with tutor (MFA) | lamylsyʁkuʁplyvitkəlølufu |
| Tutor | lamilks̪yuəs̪ikətəlufu |
| Kokoro TTS reference | la myl syʁ kuʁ ply vit kə lə lu fu. |
| Espeak TTS reference | la mjul suɹə kɔt plʌs vaɪt kwɛ lə lup fu. |

The IPA for a sentence is hard to read and compare. Maybe I can get Allosaurus to do word breaks, or maybe I can try a different tool. The phonemes from Montreal Forced Aligner look like they might be a little more manageable; I can see that the main difference is that I said lø instead of lə. I can get timing data from MFA, so I might be able to use that to break it up into words. It would be nice to get confidence data, though, since I'm pretty sure that y isn't solid yet.
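To make the IPA strings easier to compare, here's a minimal Python sketch that strips stress marks, spaces, and punctuation, then lists only the places where two transcriptions differ. It uses the standard library's `difflib`; the function names `normalize_ipa` and `ipa_differences` are made up for this example, and it compares character by character, so it doesn't know about phoneme boundaries or combining diacritics.

```python
import difflib
import unicodedata

STRESS_MARKS = "ˈˌ"
PUNCTUATION = ".,!?"


def normalize_ipa(ipa: str) -> str:
    """Drop stress marks, whitespace, and punctuation so strings line up."""
    ipa = unicodedata.normalize("NFC", ipa)
    return "".join(
        ch for ch in ipa
        if ch not in STRESS_MARKS and ch not in PUNCTUATION and not ch.isspace()
    )


def ipa_differences(reference: str, attempt: str):
    """Return (operation, reference_part, attempt_part) for each mismatch."""
    ref, att = normalize_ipa(reference), normalize_ipa(attempt)
    matcher = difflib.SequenceMatcher(None, ref, att, autojunk=False)
    return [
        (tag, ref[i1:i2], att[j1:j2])
        for tag, i1, i2, j1, j2 in matcher.get_opcodes()
        if tag != "equal"
    ]


# Strings copied from the table above
kokoro = "la myl syʁ kuʁ ply vit kə lə lu fu."
mfa = "lamylsyʁkuʁplyvitkəlølufu"
print(ipa_differences(kokoro, mfa))
```

For the Kokoro reference and the MFA transcription, this reports a single replacement of ə with ø, which matches what I spotted by eye. The Allosaurus strings differ from the reference in so many places that a character diff is probably less helpful there.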

Analyzing multiple tongue-twisters from a single session

Here are some tongue-twister attempts from my March 6 session, annotated with the comments from my tutor. First, the list of tongue-twisters in this analysis.

- Maman peint un grand lapin blanc.

- Un enfant intelligent mange lentement.

- Le roi croit voir trois noix.

- Il est loin mais moins loin que ce coin.

- Le témoin voit le chemin loin.

- Moins de foin au loin ce matin.

- La laine beige sèche près du collège.

- La croquette sèche dans l’assiette.

- Elle mène son frère à l’hôtel.

- Le verre vert est très clair.

- Elle aimait manger et rêver.

- Le cœur seul pleure doucement.

- Le beurre fond dans le cœur chaud.

- Tu es sûr du futur ?

- Un mur dur bloque la rue.

Then I can extract audio segments, transcribe the IPA, and send the audio to Azure for pronunciation analysis all in one go.

(my-subed-record-analyze-file-with-azure "~/proj/french/analysis/virelangues/2026-03-06-raphael-script.vtt")

| File | ID | Comments | All | Acc | Flu | Comp | Conf | Phonemes |
| | 01-01 | | 85 | 92 | 78 | 100 | 88 | lɒmɒaɒʁɔnlat̪ɒlɒ |
| | 01-02 | Mm hmm | 88 | 86 | 86 | 100 | 87 | mamakɔaɒkul̪ɒlatalɒ |
| | 01-03 | Ouais | 68 | 73 | 69 | 67 | 86 | lɒmɒaɒpzɒlɒ |
| | 01-04 | Ouais | 81 | 78 | 89 | 83 | 86 | ɒenɒmɒt̪ɒʁɔlɒt̪alɒŋ |
| | 02-01 | Ouais, c'est bien | 92 | 93 | 90 | 100 | 90 | ɒjnɒwsɒnɛnt̪eleʒɔnmomuʃlɔns̪mɒ |
| | 03-01 | Uh huh | 98 | 100 | 97 | 100 | 88 | jal̪aʁwaɒll̪awandwa |
| | 04-01 | Ouais, parfait | 91 | 92 | 100 | 89 | 89 | ijelwamenmwdoakesekwaŋ |
| | 05-01 | X: témoin | 83 | 82 | 90 | 83 | 88 | etemwawaəʃemɒdwaŋ |
| | 05-02 | Ouais | 92 | 88 | 96 | 100 | 89 | et̪timwawaeʃemɒlwa |
| | 06-01 | Mm hmm, parfait | 89 | 93 | 94 | 86 | 89 | wɒt̪iəwozwasənmɒtɒŋ |
| | 07-01 | X: près du collège | 78 | 79 | 99 | 71 | 87 | ɒmnteʁalol̪e |
| | 07-02 | X: près | 85 | 86 | 85 | 86 | 88 | ɒjteʁatkœl̪es |
| | 07-03 | Mm hmm | 90 | 93 | 99 | 86 | 89 | ɒl̪mnteadəkol̪en |
| | 08-01 | Ouais, c'est mieux | 99 | 99 | 99 | 100 | 90 | laokes̪estɒnlas̪iə |
| | 08-02 | Mm hmm | 97 | 96 | 100 | 100 | 90 | laokes̪est̪ɒvɒnləs̪iən |
| | 09-01 | Ouais, c'est bien | 99 | 99 | 100 | 100 | 89 | ɛnmɛnsɑnsʁɛajlot̪ɛn |
| | 10-01 | Mm hmm | 87 | 88 | 99 | 83 | 87 | nɛzaʁbɛʁejklə |
| | 11-01 | Mm hmm | 100 | 100 | 100 | 100 | 89 | elɒnmimaŋzeʁɒze |
| | 12-01 | X: doucement | 81 | 86 | 81 | 80 | 87 | ikaʁapjobil̪ədimɒ |
| | 12-02 | Ouais | 82 | 83 | 76 | 100 | 86 | ikɑsuntius̪əmɑn |
| | 12-03 | Ouais, c'est mieux | 70 | 75 | 65 | 80 | 84 | lɛkɑsødius̪əmən |
| | 13-01 | Ouais | 85 | 85 | 85 | 86 | 84 | lidəfɔdɑŋlikɑʁʃəl |
| | 14-01 | Mm hmm | 97 | 96 | 98 | 100 | 87 | kel̪eid̪jefit̪joəʁ |
| | 15-01 | X: rue | 84 | 90 | 78 | 100 | 84 | ɒwl̪midijəlɔʁlɒʁs̪u |
| | 15-02 | X: rue | 84 | 87 | 88 | 83 | 83 | ɒwmjd̪il̪ɔʁnɒku |

The play buttons even work in Org Mode because I use a custom link type for audio links.

It would be useful to analyze one tongue-twister across multiple sessions to get a sense of my progress.

Focusing on one word

Here's a deep-dive on the word "mule" from my March 3 session. I exported the words using the WhisperX JSON. Both Azure speech recognition results and Allosaurus phonemes are all over the place when I try to run them on audio segments with individual words.

(my-subed-record-extract-words "mule" "/home/sacha/sync/recordings/processed/2026-03-03-raphael.json" "/home/sacha/proj/french/analysis/mule/index.vtt")

I manually adjusted some timestamps, removed some segments, and added a reference sample from Wiktionary (source, public domain). Here's the WebVTT file with the directives: file:///home/sacha/proj/french/analysis/mule/index.vtt

(my-subed-record-analyze-file-with-azure "~/proj/french/analysis/mule/index.vtt")

| File | ID | Comments | WhisperX | All | Acc | Flu | Comp | Conf | Phonemes |
| | 01 | Wiktionary - France | | 97 | 95 | 100 | 100 | 77 | mel |
| | 02 | | 92 | 80 | 68 | 100 | 100 | 78 | mi |
| | 03 | | 91 | 80 | 68 | 100 | 100 | 79 | nol̪ɒj |
| | 04 | Bit of a y | 90 | 95 | 93 | 100 | 100 | 88 | nyjɔl̪ɒ |
| | 05 | Bit of a y | 89 | 88 | 80 | 100 | 100 | 83 | j |
| | 06 | | 89 | 88 | 80 | 100 | 100 | 82 | muə |
| | 07 | Bit of a y | 88 | 10 | 50 | 0 | 0 | 78 | niə |
| | 08 | | 88 | 76 | 60 | 100 | 100 | 81 | mio |
| | 09 | Bit of a y | 84 | 91 | 86 | 100 | 100 | 84 | mj |
| | 10 | Bit of a y | 84 | 11 | 56 | 0 | 0 | 77 | nj |
| | 11 | Bit of a y | 83 | 6 | 31 | 0 | 0 | 79 | jn |
| | 12 | | 83 | 80 | 68 | 100 | 100 | 80 | mijə |
| | 13 | | 82 | 0 | 0 | 0 | 0 | 88 | ɒ |
| | 14 | Got a small "oui" | 80 | 1 | 5 | 0 | 0 | 84 | ija |
| | 15 | | 78 | 4 | 21 | 0 | 0 | 57 | mia |
| | 16 | | 78 | 10 | 53 | 0 | 0 | 80 | miə |
| | 17 | | 75 | 10 | 52 | 0 | 0 | 77 | we |
| | 18 | Got a "non!" | 75 | 8 | 44 | 0 | 0 | 85 | mjən |
| | 19 | | 72 | 79 | 66 | 100 | 100 | 78 | mɛ |
| | 20 | Bit of a y | 72 | 79 | 66 | 100 | 100 | 79 | miə |

At the word level, the Azure pronunciation scores are all over the place, and so are the Allosaurus phonemes. For now, I think it might be better to tweak my interface so that I can more easily refer to the samples (text to speech or recordings extracted from my tutoring session) and compare new recordings, maybe with waveforms, spectrograms, and other plots.
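To put a number on "all over the place", here's a small Python sketch that splits my own attempts by whether Azure gave them a completeness score of 0, which I'm reading as "Azure didn't recognize the word at all". That interpretation is my assumption. The tuples are copied from the table above (ID, WhisperX score, Azure overall score, Azure completeness), leaving out the Wiktionary reference because it has no WhisperX score.

```python
# (id, WhisperX score, Azure overall, Azure completeness), copied from the table
SAMPLES = [
    ("02", 92, 80, 100), ("03", 91, 80, 100), ("04", 90, 95, 100),
    ("05", 89, 88, 100), ("06", 89, 88, 100), ("07", 88, 10, 0),
    ("08", 88, 76, 100), ("09", 84, 91, 100), ("10", 84, 11, 0),
    ("11", 83, 6, 0), ("12", 83, 80, 100), ("13", 82, 0, 0),
    ("14", 80, 1, 0), ("15", 78, 4, 0), ("16", 78, 10, 0),
    ("17", 75, 10, 0), ("18", 75, 8, 0), ("19", 72, 79, 100),
    ("20", 72, 79, 100),
]


def split_by_completeness(samples):
    """Separate samples Azure matched to the word from those it scored 0."""
    recognized = [s for s in samples if s[3] > 0]
    missed = [s for s in samples if s[3] == 0]
    return recognized, missed


recognized, missed = split_by_completeness(SAMPLES)
print(f"{len(missed)} of {len(SAMPLES)} attempts got a completeness of 0")
for label, group in (("recognized", recognized), ("missed", missed)):
    whisper_scores = [s[1] for s in group]
    print(f"{label}: WhisperX scores from {min(whisper_scores)} to {max(whisper_scores)}")
```

The WhisperX ranges for the two groups overlap almost completely, so a high WhisperX word score doesn't tell me whether Azure will accept the same clip.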

I like the progress I've made on a workflow for extracting and evaluating sentences or words. I'm looking forward to seeing what I can do once I understand a bit more of the research. Let me describe the workflow I have so far.

Analyzing one tongue-twister across multiple sessions

I can also compile different versions of one tongue-twister into one file to get a sense of my progress.

(my-subed-record-analyze-file-with-azure
 (my-subed-record-collect-matching-subtitles
  "Le roi croit voir trois noix"
  '(("~/sync/recordings/processed/2026-02-20-raphael-tongue-twisters.vtt" . "Feb 20")
    ("~/sync/recordings/processed/2026-02-22-virelangues-single.vtt" . "Feb 22")
    ("~/proj/french/recordings/2026-02-26-virelangues-script.vtt" . "Feb 26")
    ("~/proj/french/recordings/2026-02-27-virelangues-script.vtt" . "Feb 27")
    ("~/proj/french/recordings/2026-03-03-virelangues.vtt" . "Mar 3")
    ("~/proj/french/analysis/virelangues/2026-03-06-raphael-script.vtt" . "Mar 6"))
  "~/proj/french/analysis/virelangues/Le-roi-croit-voir-trois-noix.vtt"
  nil 'my-subed-simplify))

| File | ID | Comments | All | Acc | Flu | Comp | Conf | Phonemes |
| | 01 | Feb 20 | 76 | 78 | 73 | 83 | 85 | ɛmiwəkɔwɑtwɑsmɑs |
| | 02 | Feb 22 | 97 | 98 | 97 | 100 | 88 | jusɒl̪vwatlɒnwa |
| | 03 | Feb 26 | 97 | 100 | 96 | 100 | 89 | əkwɒwɑtwanwɑ |
| | 04 | Feb 27 | 96 | 95 | 97 | 100 | 87 | jɛʀwɑl̪ɛvwɑtwɛnwɑ |
| | 05 | Mar 3 | 94 | 96 | 92 | 100 | 88 | juwakwɒl̪dwɑwanwɑ |
| | 06 | Mar 6: : Uh huh | 98 | 100 | 97 | 100 | 88 | jal̪aʁwaɒll̪awandwa |

Emacs Lisp code for collecting matching subtitles

(defun my-subed-record-collect-matching-subtitles (text files output-file &optional match-fn transform-fn)
  "Find subtitles that match TEXT in FILES and write to OUTPUT-FILE.
FILES can be a list of filenames or a list of (FILE . NOTE) pairs.
By default, TEXT is an approximate match based on
`subed-word-data-compare-normalized-string-distance'.
If TRANSFORM-FN is specified, use that on SUBTITLE-TEXT before comparing.
If MATCH-FN is specified, use that to match instead. It will be called
with the arguments input-text and subtitle-text.
Return OUTPUT-FILE."
  (subed-create-file
   output-file
   (seq-mapcat
    (lambda (o)
      (let ((media-file (subed-guess-media-file nil (expand-file-name (if (consp o) (car o) o)))))
        (seq-keep
         (lambda (sub)
           (when transform-fn
             (setf (elt sub 3) (funcall transform-fn (elt sub 3))))
           (when (funcall (or match-fn 'subed-word-data-compare-normalized-string-distance) text (elt sub 3))
             ;; Add audio note if missing
             (unless (or (subed-record-get-directive "#+AUDIO" (elt sub 4))
                         (null media-file))
               (setf (elt sub 4)
                     (subed-record-set-directive "#+AUDIO" media-file (or (elt sub 4) ""))))
             ;; Prepend note if specified
             (when (and (consp o) (cdr o))
               (let ((current-note (subed-record-get-directive "#+NOTE" (elt sub 4))))
                 (setf (elt sub 4)
                       (subed-record-set-directive
                        "#+NOTE"
                        (if current-note
                            (concat (cdr o) ": " current-note)
                          (cdr o))
                        (or (elt sub 4) "")))))
             sub))
         ;; Entries in FILES may be plain filenames or (FILE . NOTE) pairs
         (subed-parse-file (if (consp o) (car o) o)))))
    files)
   t)
  output-file)
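For readers who don't use Emacs, here's a rough Python sketch of the same idea: normalize the text, keep cues that approximately match a target phrase, and label each match with its session. I haven't reproduced how `subed-word-data-compare-normalized-string-distance` actually measures distance; this stand-in uses `difflib`'s similarity ratio, and the 0.8 threshold, the function names, and the `(label, cues)` input shape are all assumptions for illustration.

```python
import difflib
import re
import unicodedata


def simplify(text: str) -> str:
    """Lowercase, drop punctuation, and collapse whitespace."""
    text = unicodedata.normalize("NFC", text).lower()
    text = re.sub(r"[^\w\s']", " ", text)
    return " ".join(text.split())


def approximately_matches(target: str, candidate: str, threshold: float = 0.8) -> bool:
    """True if the simplified strings are similar enough (threshold is a guess)."""
    ratio = difflib.SequenceMatcher(
        None, simplify(target), simplify(candidate), autojunk=False
    ).ratio()
    return ratio >= threshold


def collect_matching(target, sessions):
    """SESSIONS is a list of (label, [cue text, ...]); return [(label, cue), ...]."""
    return [
        (label, cue)
        for label, cues in sessions
        for cue in cues
        if approximately_matches(target, cue)
    ]


# Made-up cue lists for illustration; the phrases come from the list above
sessions = [
    ("Feb 20", ["Le roi croix voir trois noix", "Maman peint un grand lapin blanc."]),
    ("Mar 6", ["Le roi croit voir trois noix."]),
]
print(collect_matching("Le roi croit voir trois noix", sessions))
```

An approximate match matters here because speech recognition often gets a word or two wrong in exactly the phrases I'm still learning to pronounce.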

Workflow

Extracting parts of the recording

I use OBS to record both my microphone and the tutor's voice. I use WhisperX to transcribe my recording with speaker diarization.

Shell script

#!/bin/zsh
# Split WHISPER_FLAGS into separate arguments using zsh's (z) expansion flag
WHISPER_ARGS=(${(z)WHISPER_FLAGS})
MAX_LINE_WIDTH="${MAX_LINE_WIDTH:-50}"
MODEL="${MODEL:-large-v2}"
for FILE in "$@"; do
  text="${FILE%.*}.txt"
  if [ -f "$text" ]; then
    echo "Skipping $FILE as it's already been transcribed."
  else
    # Pass the split array so that multiple extra flags arrive as separate arguments
    ~/vendor/whisperx/.venv/bin/whisperx --model "$MODEL" --diarize \
      --hf_token "$HUGGING_FACE_API_KEY" --language fr \
      --align_model WAV2VEC2_ASR_LARGE_LV60K_960H --compute_type int8 \
      --print_progress True --max_line_width "$MAX_LINE_WIDTH" \
      --segment_resolution chunk --max_line_count 1 \
      --initial_prompt "Emacs et Org Mode sont d'excellents outils. J'utilise Org-roam pour prendre des notes. Today I am recording a braindump about technical setups. C'est vraiment utile pour la productivité." \
      "$FILE" "${WHISPER_ARGS[@]}"
    rm -f "${FILE%.*}.srt"
  fi
done

I can review the VTT manually, but it's also useful to be able to quickly extract different attempts at the phrases or words. I added subed-record-extract-all-approximately-matching-phrases to subed-record.el so that I can generate a starting point with something like this:

(subed-record-extract-all-approximately-matching-phrases
 phrases
 "/home/sacha/sync/recordings/processed/2026-03-06-raphael.json"
 "/home/sacha/proj/french/analysis/virelangues/2026-03-06-raphael-script.vtt")

Ideas:

- Group by speaker ID to make it possible to extract phrases even with interstitial corrections.

- Add an optional parameter that lets me append to an existing file.

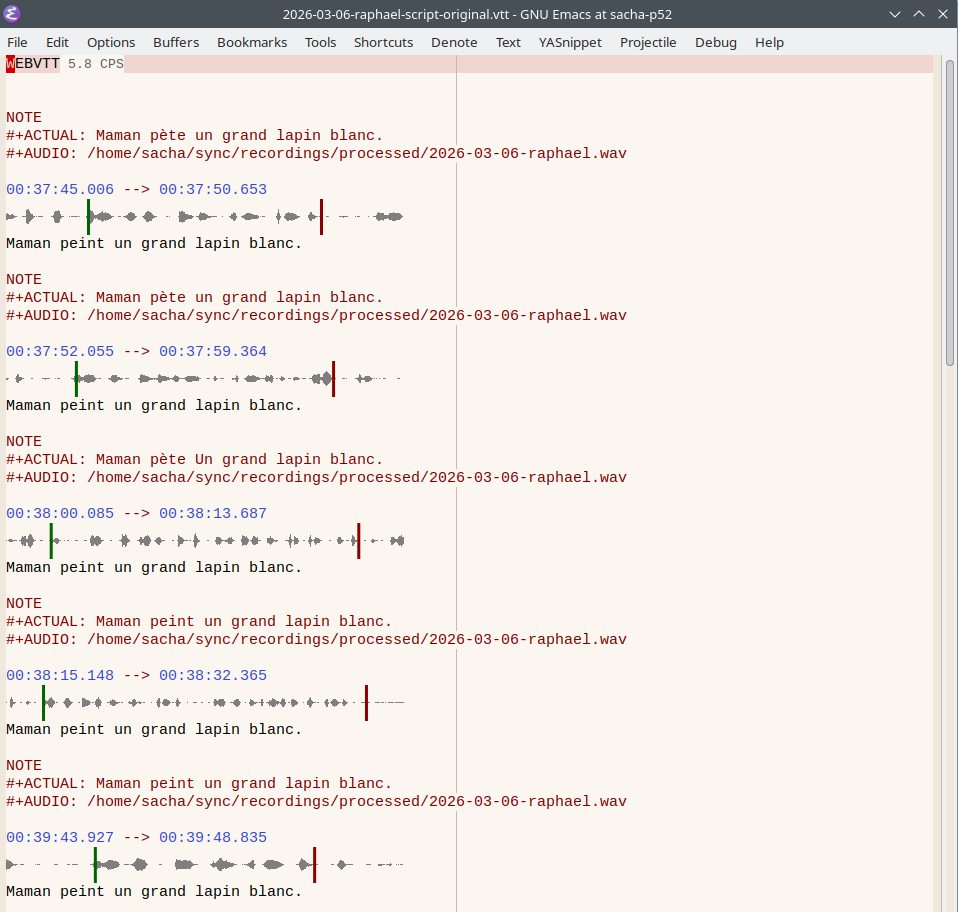

Here's a copy of its output: file:///home/sacha/proj/french/analysis/virelangues/2026-03-06-raphael-script-original.vtt

Based on the waveforms, I can see that some timestamps need to be adjusted, and some phrases may need to be duplicated, trimmed, or split. This is the file that I ended up with.

file:///home/sacha/proj/french/analysis/virelangues/2026-03-06-raphael-script.vtt

Sometimes I want to do a deep dive on a specific word. Here's another function that uses the WhisperX JSON data to extract just single words, like in my analysis of "mule".

Extracting words

(defun my-subed-record-extract-words (word word-data-file output-file)
  "Write cues for WORD from WORD-DATA-FILE to OUTPUT-FILE.
Cues are sorted by WhisperX score, highest first."
  (let ((media-file (subed-guess-media-file nil word-data-file)))
    (subed-create-file
     output-file
     (mapcar (lambda (o)
               (list nil
                     (alist-get 'start o)
                     (alist-get 'end o)
                     (alist-get 'text o)
                     (format "#+AUDIO: %s\n#+WHISPER_SCORE: %d\n#+SPEAKER: %s"
                             media-file
                             (* (alist-get 'score o) 100)
                             (or (alist-get 'speaker o) ""))))
             ;; The :key and :reverse keywords use the `sort' calling
             ;; convention from Emacs 30
             (sort
              (seq-filter
               (lambda (o)
                 (subed-word-data-compare-normalized-string-distance
                  word
                  (alist-get 'text o)))
               (subed-word-data-parse-file
                word-data-file))
              :key (lambda (o) (alist-get 'score o))
              :reverse t))
     t)))
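Here's a minimal Python sketch of the same extraction, working directly on the WhisperX JSON instead of going through subed. It assumes the JSON has a `segments` list whose entries carry a `words` list with `word`, `start`, `end`, `score`, and (with diarization) `speaker` keys, which is my understanding of the WhisperX output rather than something this post confirms. It also uses an exact match after stripping punctuation instead of the approximate match above, and `extract_word_attempts` is a made-up name.

```python
import json


def extract_word_attempts(whisperx_data: dict, word: str):
    """Return attempts at WORD, highest WhisperX score first."""
    target = word.casefold()
    attempts = []
    for segment in whisperx_data.get("segments", []):
        for entry in segment.get("words", []):
            text = entry.get("word", "").strip(" .,;:!?\"'").casefold()
            # Skip other words, and entries without timing or a score
            if text != target or "start" not in entry or "score" not in entry:
                continue
            attempts.append({
                "start": entry["start"],
                "end": entry.get("end"),
                "text": text,
                "score": round(entry["score"] * 100),
                # Fall back to the segment's speaker if the word has none
                "speaker": entry.get("speaker", segment.get("speaker", "")),
            })
    return sorted(attempts, key=lambda a: a["score"], reverse=True)


# Made-up sample in the assumed WhisperX shape, not real session data
sample = json.loads("""
{"segments": [{"speaker": "SPEAKER_00", "words": [
  {"word": "La", "start": 0.1, "end": 0.2, "score": 0.9, "speaker": "SPEAKER_00"},
  {"word": "mule", "start": 0.25, "end": 0.6, "score": 0.5, "speaker": "SPEAKER_00"},
  {"word": "mule.", "start": 1.0, "end": 1.4, "score": 0.75}
]}]}
""")
for attempt in extract_word_attempts(sample, "mule"):
    print(attempt)
```

Sorting by score puts the attempts that WhisperX was most confident about at the top, which is the order used in the "mule" table.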

Azure pronunciation assessment

I can use the following code from any subtitle of 30 seconds or less to automatically extract the audio for that subtitle and add a comment with the scores from Microsoft Azure pronunciation assessment. It needs an API key and region. I think the free tier includes 5 hours of speech each month, with additional hours priced at USD 0.66 per hour for short files less than 30 seconds (billed in 1-second increments). There's another API that can handle longer segments for USD 1.32 per hour (also included in the 5 hours free), but I'll probably need a Python or NodeJS program. Since I'm working with words and short sentences for now, I can use the REST API.

(defvar my-subed-record-azure-assessment nil)
(defvar my-subed-record-azure-assess-pronunciation-lang "fr-FR")

;; (my-azure-assess-pronunciation "~/proj/french/analysis/mule/ref-france-sample.wav" "mule")
(defun my-azure-assess-pronunciation (audio-file reference-text)
  "Send AUDIO-FILE to Azure for pronunciation assessment against REFERENCE-TEXT.
Needs the AZURE_SPEECH_REGION and AZURE_SPEECH_KEY environment variables."
  (interactive (list (read-file-name "Audio: ")
                     (read-string "Text: ")))
  (let* ((url (format "https://%s.stt.speech.microsoft.com/speech/recognition/conversation/cognitiveservices/v1?language=%s&format=detailed"
                      (getenv "AZURE_SPEECH_REGION")
                      (url-hexify-string my-subed-record-azure-assess-pronunciation-lang)))
         ;; 1. Create the Configuration JSON
         (config-json (json-encode `(("ReferenceText" . ,reference-text)
                                     ("GradingSystem" . "HundredMark")
                                     ("Granularity" . "Phoneme")
                                     ("Dimension" . "Comprehensive"))))
         ;; 2. Base64 encode the config
         (config-base64 (base64-encode-string (encode-coding-string config-json 'utf-8) t))
         ;; 3. Prepare headers
         (headers `(("Ocp-Apim-Subscription-Key" . ,(getenv "AZURE_SPEECH_KEY"))
                    ("Pronunciation-Assessment" . ,config-base64)
                    ("Content-Type" . "audio/wav; codecs=audio/pcm; samplerate=16000")))
         (data (plz 'post url
                 :headers headers
                 :body `(file ,audio-file)
                 :as #'json-read)))
    (when (called-interactively-p 'any)
      (kill-new (my-subed-record-azure-format-assessment data))
      (message "%s" (my-subed-record-azure-format-assessment data)))
    data))

(defun my-subed-record-azure-assess-pronunciation (&optional beg end)
  "Assess the pronunciation of the current cue."
  (interactive (if (region-active-p)
                   (list (region-beginning)
                         (region-end))
                 (list (subed-subtitle-start-pos)
                       (save-excursion
                         (subed-jump-to-subtitle-end)
                         (point)))))
  (subed-for-each-subtitle beg end t
    (let* ((temp-file (make-temp-file "subed-record-azure" nil ".wav"))
           (text (my-subed-simplify (subed-subtitle-text)))
           data
           score)
      (subed-record-extract-audio-for-current-subtitle-to-file temp-file)
      (setq data
            (my-azure-assess-pronunciation
             temp-file
             text))
      (when data
        (subed-record-set-directive
         "#+SCORE"
         (my-subed-record-azure-format-assessment data))
        (setq my-subed-record-azure-assessment data))
      (delete-file temp-file)
      data)))

(defun my-subed-record-azure-format-assessment (&optional data)
  (setq data (or data my-subed-record-azure-assessment))
  ;; `elt' works whether `json-read' returns arrays as vectors or lists
  (let-alist (elt (alist-get 'NBest data) 0)
    (format "%d; A: %d, F: %d, C: %d, Conf: %d"
            (or .PronunciationAssessment.PronScore .PronScore)
            (or .PronunciationAssessment.AccuracyScore .AccuracyScore)
            (or .PronunciationAssessment.FluencyScore .FluencyScore)
            (or .PronunciationAssessment.CompletenessScore .CompletenessScore)
            (* 100.0 (or .PronunciationAssessment.Confidence
                         .Confidence)))))
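Since I might need a Python program for longer segments anyway, here's a minimal Python sketch of the same short-audio REST request. It only builds the URL and headers and formats a response; it doesn't send anything. The URL, header names, and config keys mirror the Emacs Lisp above. The function names, the placeholder region `westus`, and the flat shape of the sample response are assumptions for illustration (the sample numbers are the Mar 3 column of the first score table).

```python
import base64
import json
import os
import urllib.parse


def build_assessment_request(reference_text, region, key, language="fr-FR"):
    """Return (url, headers) for a short-audio pronunciation assessment request."""
    config = {
        "ReferenceText": reference_text,
        "GradingSystem": "HundredMark",
        "Granularity": "Phoneme",
        "Dimension": "Comprehensive",
    }
    # The assessment settings travel as base64-encoded JSON in a header
    encoded = base64.b64encode(json.dumps(config).encode("utf-8")).decode("ascii")
    query = urllib.parse.urlencode({"language": language, "format": "detailed"})
    url = (f"https://{region}.stt.speech.microsoft.com/speech/recognition/"
           f"conversation/cognitiveservices/v1?{query}")
    headers = {
        "Ocp-Apim-Subscription-Key": key,
        "Pronunciation-Assessment": encoded,
        "Content-Type": "audio/wav; codecs=audio/pcm; samplerate=16000",
    }
    return url, headers


def format_assessment(data):
    """Format the first NBest entry like the #+SCORE directive."""
    best = data["NBest"][0]
    # Scores may be nested under PronunciationAssessment or sit on the entry itself
    scores = best.get("PronunciationAssessment", best)
    confidence = scores.get("Confidence", best.get("Confidence", 0))
    return "%d; A: %d, F: %d, C: %d, Conf: %d" % (
        scores["PronScore"], scores["AccuracyScore"], scores["FluencyScore"],
        scores["CompletenessScore"], round(confidence * 100))


url, headers = build_assessment_request(
    "mule",
    os.environ.get("AZURE_SPEECH_REGION", "westus"),  # placeholder region
    os.environ.get("AZURE_SPEECH_KEY", ""),
)
sample_response = {"NBest": [{"Confidence": 0.9, "PronScore": 94, "AccuracyScore": 93,
                              "FluencyScore": 93, "CompletenessScore": 100}]}
print(url)
print(format_assessment(sample_response))
```

To actually send the request, the URL and headers could be passed to any HTTP client along with the WAV bytes as the POST body, for example `urllib.request.Request(url, data=wav_bytes, headers=headers, method="POST")`.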

Phonemes with Allosaurus, Espeak, or Kokoro FastAPI

(defvar my-subed-record-allosaurus-command '("/home/sacha/proj/french/.venv/bin/python3" "-m" "allosaurus.run" "--lang" "fra" "-i"))

(defun my-subed-record-allosaurus-phonemes (&optional beg end)
  (interactive (if (region-active-p)
                   (list (region-beginning)
                         (region-end))
                 (list (subed-subtitle-start-pos)
                       (save-excursion
                         (subed-jump-to-subtitle-end)
                         (point)))))
  (subed-for-each-subtitle beg end t
    (let* ((temp-file (make-temp-file "subed-record-allosaurus" nil ".wav")))
      (subed-record-extract-audio-for-current-subtitle-to-file temp-file)
      (subed-record-set-directive
       "#+PHONEMES"
       (with-temp-buffer
         (apply #'call-process (car my-subed-record-allosaurus-command)
                nil t nil
                (append
                 (cdr my-subed-record-allosaurus-command)
                 (list temp-file)))
         (delete-file temp-file)
         (replace-regexp-in-string " " "" (buffer-string)))))))

(defun my-lang-espeak-ng-phonemes (text)
  (interactive "MText: ")
  (let ((data
         (with-temp-buffer
           (call-process "espeak" nil t nil "-q" "--ipa" text)
           (string-trim (buffer-string)))))
    (when (called-interactively-p 'any)
      (kill-new data)
      (message "%s" data))
    ;; Return the phonemes rather than the input text
    data))

(defun my-french-kokoro-fastapi-phonemes (s)
  (interactive "MText: ")
  (my-kokoro-fastapi-ensure)
  (let ((data (alist-get 'phonemes
                         (plz 'post "http://localhost:8880/dev/phonemize"
                           :headers '(("Content-Type" . "application/json"))
                           :body (json-encode `((text . ,s)
                                                (language . "fr-fr")))
                           :as #'json-read))))
    (when (called-interactively-p 'any)
      (kill-new data)
      (message "%s" data))
    data))

Splitting into segments and making a table

(defun my-subed-record-make-groups (subtitles)
  "Come up with a good ID for attempts, grouping by cue text."
  (let* ((group-num 0)
         (sub-num 0)
         (grouped                       ; ((text . (start start start)) ...)
          (mapcar
           (lambda (o)
             (cons (car o)
                   (mapcar
                    (lambda (sub) (elt sub 1))
                    (cdr o))))
           (seq-group-by
            (lambda (o) (elt o 3))
            (seq-remove (lambda (o)
                          (and (elt o 4)
                               (string-match "#\\+SKIP" (elt o 4))))
                        subtitles))))
         (one-group (= (length grouped) 1)))
    (seq-mapcat
     (lambda (o)
       (setq group-num (1+ group-num))
       (unless one-group (setq sub-num 0))
       (seq-map
        (lambda (sub-start)
          (setq sub-num (1+ sub-num))
          (cons sub-start (if one-group
                              (format "%02d" sub-num)
                            (format "%02d-%02d" group-num sub-num))))
        (cdr o)))
     grouped)))

(defun my-subed-record-analyze-file-with-azure (vtt &optional always-create filter)
  "Analyze the cues in VTT and return table data for Org.
Extract the audio for each cue, re-extracting existing files if
ALWAYS-CREATE is non-nil.  Add Azure scores and Allosaurus phonemes
to cues that don't have them yet.
If FILTER is a regexp, only include cues whose text matches it."
  (with-current-buffer (find-file-noselect vtt)
    (let* (results
           filename
           (ids (my-subed-record-make-groups
                 (seq-filter
                  (lambda (o)
                    (or (null filter)
                        (string-match filter (elt o 3))))
                  (subed-subtitle-list))))
           id)
      (subed-for-each-subtitle (point-min) (point-max) t
        ;; Skip cues marked with #+SKIP and cues that don't match FILTER
        (unless (or (and (subed-subtitle-comment)
                         (string-match "#\\+SKIP" (subed-subtitle-comment)))
                    (and filter
                         (not (string-match filter (subed-subtitle-text)))))
          (setq id (alist-get (subed-subtitle-msecs-start) ids))
          (setq filename (expand-file-name
                          (format "%s-%s.opus"
                                  (file-name-base vtt)
                                  id)
                          (file-name-directory vtt)))
          (when (or always-create (not (file-exists-p filename)))
            (subed-record-extract-audio-for-current-subtitle-to-file filename))
          ;; Treat a missing comment as empty so that `string-match' doesn't fail
          (unless (string-match (regexp-quote "#+SCORE") (or (subed-subtitle-comment) ""))
            (my-subed-record-azure-assess-pronunciation))
          (unless (string-match (regexp-quote "#+PHONEMES") (or (subed-subtitle-comment) ""))
            (my-subed-record-allosaurus-phonemes))
          (let* ((comment (or (subed-subtitle-comment) ""))
                 (scores (mapcar (lambda (o)
                                   (if (string-match (concat (regexp-quote o) ": \\([0-9]+\\)") comment)
                                       (match-string 1 comment)
                                     ""))
                                 '("#+WHISPER_SCORE" "#+SCORE" "A" "F" "C" "Conf")))
                 (phonemes (when (string-match "#\\+PHONEMES: \\(.+\\)" comment)
                             (match-string 1 comment)))
                 (text (subed-subtitle-text))
                 (extra-comment (when (string-match "#\\+NOTE: \\(.+\\)" comment) (match-string 1 comment))))
            (push
             (append
              (list (org-link-make-string (format "audio:%s?icon=t" filename) "▶️")
                    id
                    (or extra-comment ""))
              scores
              (list
               (org-link-make-string (concat "abbr:" text) phonemes)))
             results))))
      (setq results
            (cons
             '("File" "ID" "Comments" "WhisperX" "All" "Acc" "Flu" "Comp" "Conf" "Phonemes")
             results))
      (my-org-table-remove-blank-columns results t))))

(defun my-org-table-remove-blank-columns (data &optional has-header)
  "Remove blank columns from DATA.
Skip the first line if HAS-HEADER is non-nil."
  (cl-loop
   ;; Use DATA here; the original referred to the caller's `results' variable
   for i from (1- (length (car data))) downto 1
   do (unless (delq nil
                    (mapcar
                     (lambda (o)
                       (and (elt o i)
                            (not (string= (elt o i) ""))))
                     (if has-header
                         (cdr data)
                       data)))
        (setq data
              (mapcar
               (lambda (o)
                 (seq-remove-at-position o i))
               data))))
  data)
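The IDs in the tables (like 01-02 for the second attempt at the first tongue-twister) come from grouping cues by their text. Here's a small Python sketch of that numbering scheme as I understand it from `my-subed-record-make-groups`: cues marked `#+SKIP` are ignored, attempts are numbered within each group, and the group prefix is dropped when there's only one group. The `(start_ms, text, comment)` tuples and the name `make_ids` are simplifications for illustration, not subed's actual data structure.

```python
def make_ids(cues):
    """Map each cue's start time to an ID like "02-01", grouping by cue text.

    CUES is a list of (start_ms, text, comment) tuples.
    """
    groups = {}  # text -> [start_ms, ...], in order of first appearance
    for start_ms, text, comment in cues:
        if comment and "#+SKIP" in comment:
            continue
        groups.setdefault(text, []).append(start_ms)

    one_group = len(groups) == 1
    ids = {}
    for group_num, starts in enumerate(groups.values(), start=1):
        for sub_num, start_ms in enumerate(starts, start=1):
            if one_group:
                ids[start_ms] = f"{sub_num:02d}"
            else:
                ids[start_ms] = f"{group_num:02d}-{sub_num:02d}"
    return ids


# Made-up timestamps; the phrases come from the March 6 list
cues = [
    (1000, "Maman peint un grand lapin blanc.", None),
    (5000, "Maman peint un grand lapin blanc.", "#+NOTE: Mm hmm"),
    (9000, "Maman peint un grand lapin blanc.", "#+SKIP"),
    (12000, "Un enfant intelligent mange lentement.", None),
]
print(make_ids(cues))
```

Keying the IDs by start time means the same lookup can be used later while looping over the cues in the buffer, which is how the analysis function finds each cue's ID.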

Collecting segments from multiple sessions

First I collect a sample from different files