Comparing pronunciation recordings across time

Posted: - Modified: | french, emacs, org, subed

- : Added reconstructions from today and moved more of the code to sachac/learn-lang.

- : Added updates from today.

- : Added reference audio for the second set.

- : I added pronunciation segments for the new set of tongue-twisters I got on Mar 13.

- : I added a column for Feb 20, the first session with the sentences. I also added keyboard shortcuts (1..n) for playing the audio of the row that the mouse is on.

2026-02-20: First set: Maman peint un grand lapin blanc, etc.

My French tutor gave me a list of sentences to help me practise pronunciation.

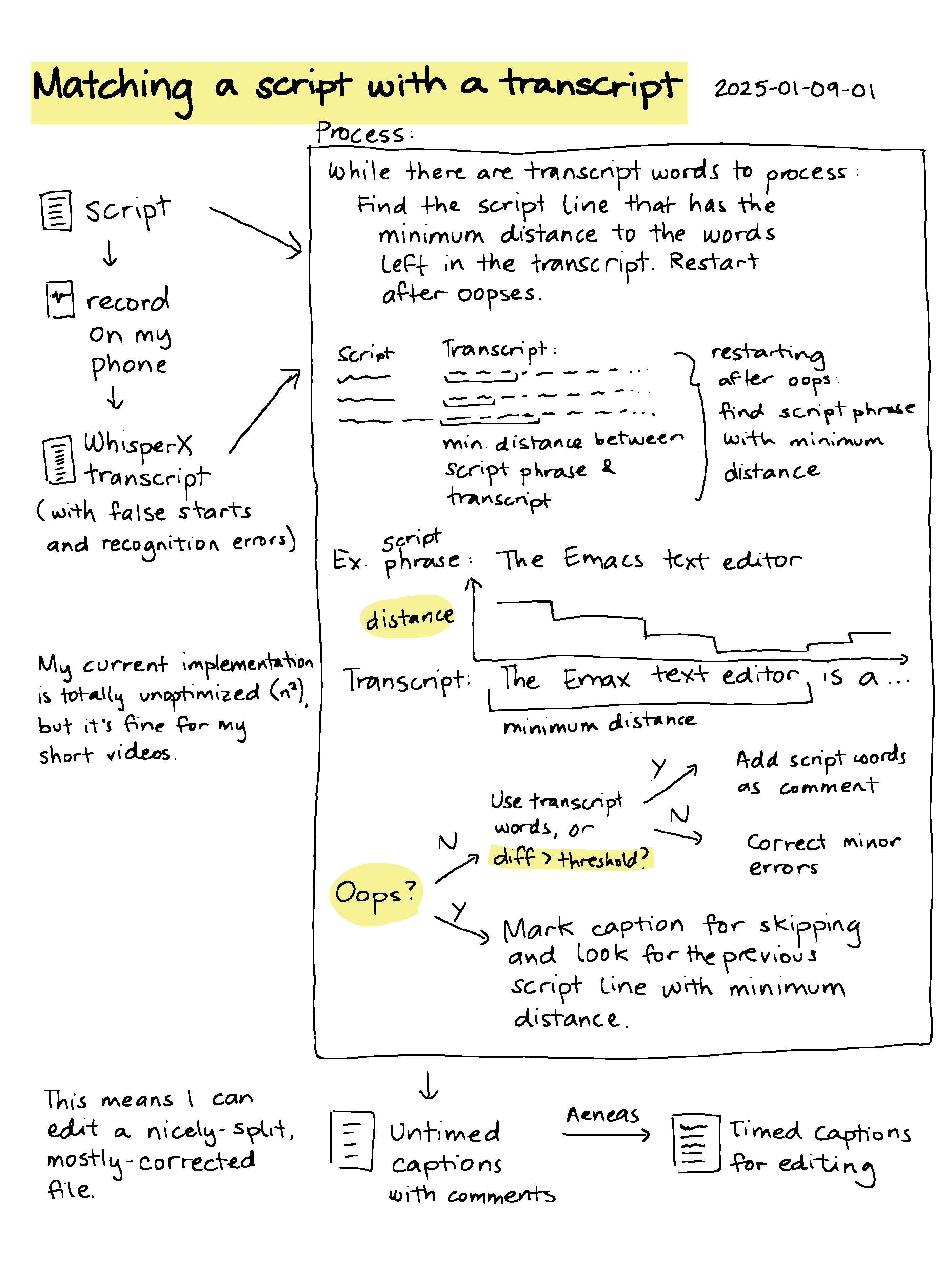

I can fuzzy-match these with the word timing JSON from WhisperX, like this.

Extract all approximately matching phrases

    (subed-record-extract-all-approximately-matching-phrases
     sentences
     "/home/sacha/sync/recordings/2026-02-20-raphael.json"
     "/home/sacha/proj/french/analysis/virelangues/2026-02-20-raphael-script.vtt")

Sentences

- Maman peint un grand lapin blanc.

- Un enfant intelligent mange lentement.

- Le roi croit voir trois noix.

- Le témoin voit le chemin loin.

- Moins de foin au loin ce matin.

- La laine beige sèche près du collège.

- La croquette sèche dans l'assiette.

- Elle mène son frère à l'hôtel.

- Le verre vert est très clair.

- Elle aimait manger et rêver.

- Le jeu bleu me plaît peu.

- Ce neveu veut un jeu.

- Le feu bleu est dangereux.

- Le beurre fond dans le cœur chaud.

- Les fleurs de ma sœur sentent bon.

- Le hibou sait où il va.

- L'homme fort mord la pomme.

- Le sombre col tombe.

- L'auto saute au trottoir chaud.

- Le château d'en haut est beau.

- Le cœur seul pleure doucement.

- Tu es sûr du futur ?

- Trois très grands trains traversent trois trop grandes rues.

- Je veux deux feux bleus, mais la reine préfère la laine beige.

- Vincent prend un bain en chantant lentement.

- La mule sûre court plus vite que le loup fou.

- Luc a bu du jus sous le pont où coule la boue.

- Le frère de Robert prépare un rare rôti rouge.

- La mule court autour du mur où hurle le loup.
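Here is a rough sketch of how this kind of approximate matching could work. It is not the code in subed-record. It assumes the WhisperX words have already been read into a list of plists with `:word`, `:start`, and `:end` keys (a hypothetical shape), and the `my-demo-` function names are made up for illustration. It slides a window as long as the phrase over the transcript and keeps the window with the smallest Levenshtein distance, using the built-in `string-distance`.

```elisp
(require 'seq)
(require 'subr-x)

(defun my-demo-normalize (text)
  "Lowercase TEXT and drop common punctuation so comparisons are forgiving."
  (string-trim
   (replace-regexp-in-string
    "[[:space:]]+" " "
    (replace-regexp-in-string "[.,;:!?'’\"-]" " " (downcase text)))))

(defun my-demo-find-phrase (phrase words)
  "Return (START END DISTANCE) for the run of WORDS closest to PHRASE.
WORDS is a list of plists like (:word \"Maman\" :start 1.0 :end 1.3).
This plist shape is an assumption made for this sketch."
  (let* ((target (my-demo-normalize phrase))
         (n (length (split-string target " " t)))
         (vec (vconcat words))
         best)
    (when (> n 0)
      ;; Try every window of N consecutive words.
      (dotimes (i (max 0 (1+ (- (length vec) n))))
        (let* ((window (seq-subseq vec i (+ i n)))
               (text (my-demo-normalize
                      (mapconcat (lambda (w) (plist-get w :word)) window " ")))
               (distance (string-distance target text)))
          ;; Keep the window with the smallest edit distance so far.
          (when (or (null best) (< distance (nth 2 best)))
            (setq best (list (plist-get (aref window 0) :start)
                             (plist-get (aref window (1- n)) :end)
                             distance))))))
    best))

;; Made-up timings; the transcript mishears "peint" as "pain".
(my-demo-find-phrase
 "Maman peint un grand lapin blanc."
 '((:word "Bonjour" :start 0.0 :end 0.4)
   (:word "Maman" :start 1.0 :end 1.3)
   (:word "pain" :start 1.3 :end 1.6)
   (:word "un" :start 1.6 :end 1.7)
   (:word "grand" :start 1.7 :end 2.0)
   (:word "lapin" :start 2.0 :end 2.4)
   (:word "blanc." :start 2.4 :end 2.9)
   (:word "Oui" :start 3.5 :end 3.8)))
;; => (1.0 2.9 2)
```

A real version would probably also want a distance threshold, so that sentences that were never said don't get matched to something unrelated.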

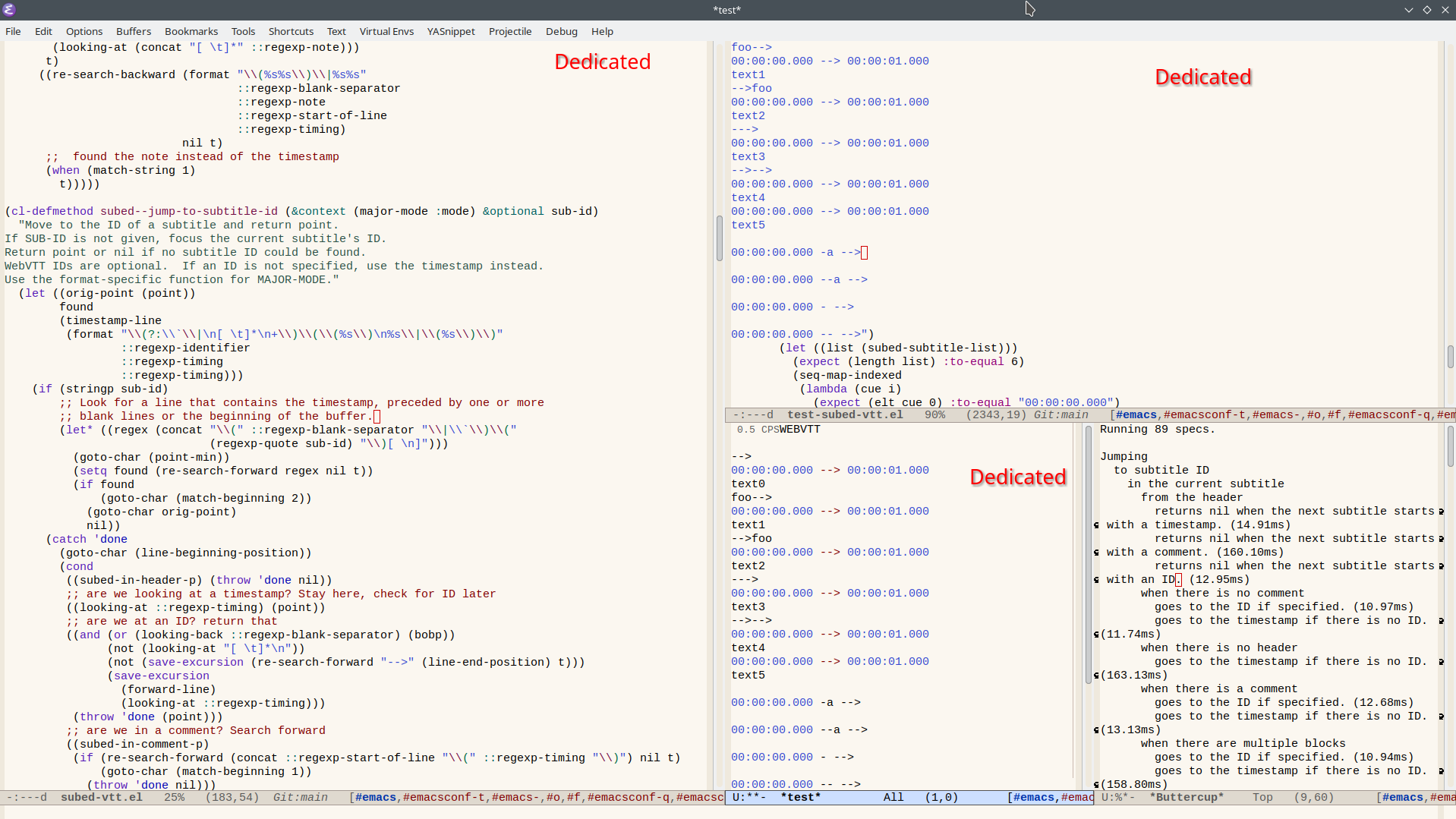

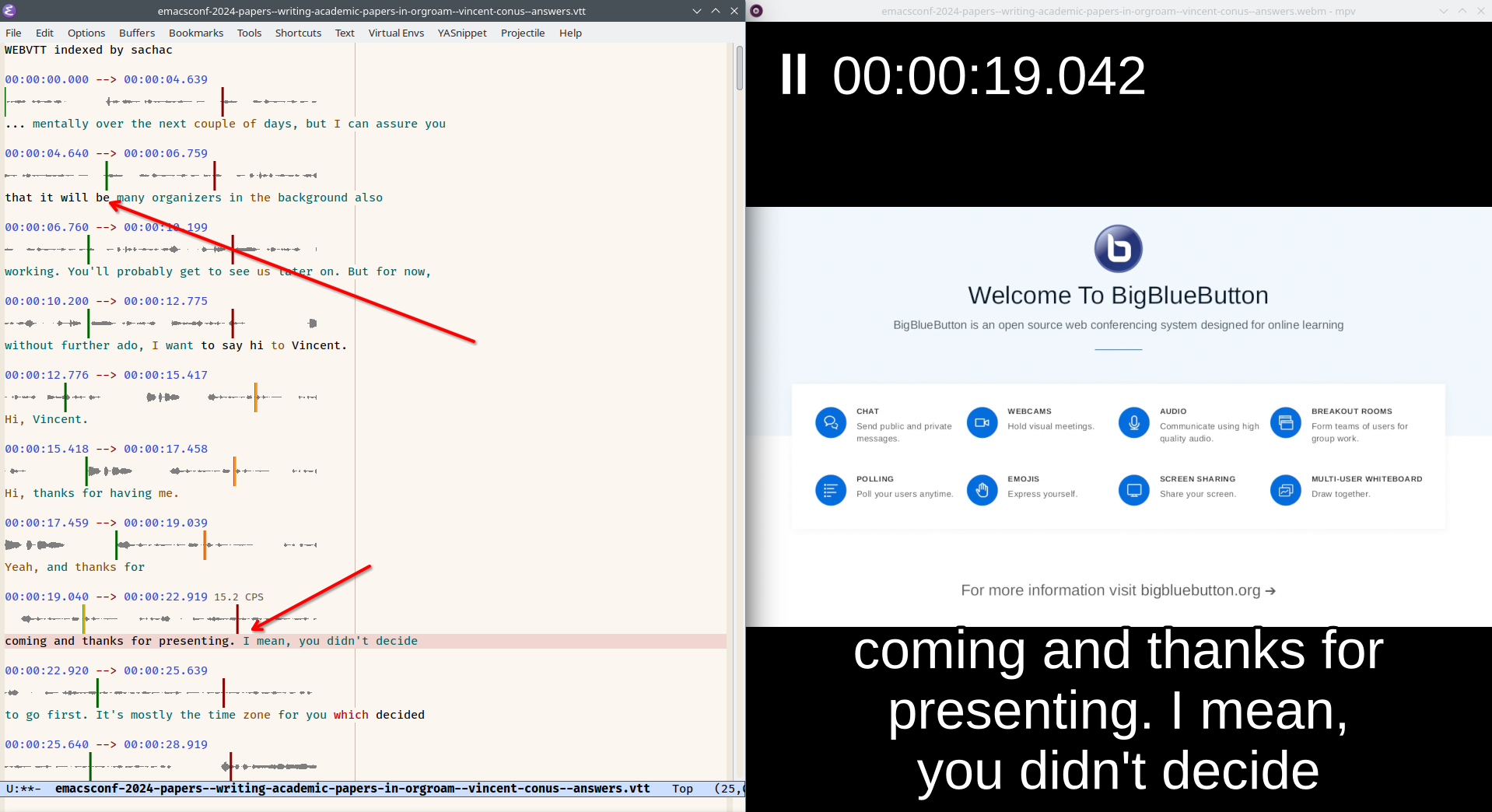

Then I can use subed-record to manually tweak them, add notes, and so on. I end up with VTT files like 2026-03-06-raphael-script.vtt. I can also assemble the snippets for a session into a single audio file.

I wanted to compare my attempts over time, so I wrote some code to use Org Mode and subed-record to build a table with little audio players that I can use both within Emacs and in the exported HTML.

This collects just the last attempt at each sentence from a number of my sessions (both with the tutor and on my own). The score is from the Microsoft Azure pronunciation assessment service. I'm not entirely sure about its validity yet, but I thought I'd add it for fun. An asterisk (*) marks entries where I've added some notes from my tutor, which should be available as a title attribute on hover. (Someday I'll figure out a mobile-friendly way to do that.)
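The "last attempt" idea can be sketched in a few lines. This is only an illustration, not the implementation of `learn-lang-subed-record-get-last-attempt`: it assumes attempts are `(TEXT . SCORE)` pairs in chronological order, and both the function name and the scores below are made up.

```elisp
(defun my-demo-last-attempts (attempts)
  "Return the last (TEXT . SCORE) pair for each distinct TEXT in ATTEMPTS.
ATTEMPTS is assumed to be in chronological order.  The result keeps the
order in which each sentence was first attempted."
  (let ((latest (make-hash-table :test #'equal))
        order)
    (dolist (attempt attempts)
      ;; Remember the order in which sentences first appear.
      (unless (gethash (car attempt) latest)
        (push (car attempt) order))
      ;; Later attempts overwrite earlier ones.
      (puthash (car attempt) attempt latest))
    (mapcar (lambda (text) (gethash text latest))
            (nreverse order))))

;; Illustrative scores only, not taken from the table below.
(my-demo-last-attempts
 '(("Le roi croit voir trois noix." . 70)
   ("Le sombre col tombe." . 60)
   ("Le roi croit voir trois noix." . 90)))
;; => (("Le roi croit voir trois noix." . 90) ("Le sombre col tombe." . 60))
```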

Calling it with my sentences and files

    (learn-lang-subed-record-summarize-segments
     sentences
     '(("/home/sacha/proj/french/analysis/virelangues/2026-02-20-raphael-script.vtt" . "Feb 20")
       ;("~/sync/recordings/processed/2026-02-20-raphael-tongue-twisters.vtt" . "Feb 20")
       ("~/sync/recordings/processed/2026-02-22-virelangues-single.vtt" . "Feb 22")
       ("~/proj/french/recordings/2026-02-26-virelangues-script.vtt" . "Feb 26")
       ("~/proj/french/recordings/2026-02-27-virelangues-script.vtt" . "Feb 27")
       ("~/proj/french/recordings/2026-03-03-virelangues.vtt" . "Mar 3")
       ("/home/sacha/sync/recordings/processed/2026-03-03-raphael-reference-script.vtt" . "Mar 3")
       ("~/proj/french/analysis/virelangues/2026-03-06-raphael-script.vtt" . "Mar 6")
       ("~/proj/french/analysis/virelangues/2026-03-12-virelangues-script.vtt" . "Mar 12"))
     "~/proj/french/analysis/virelangues/clip"
     #'learn-lang-subed-record-get-last-attempt)

| Feb 20 | Feb 22 | Feb 26 | Feb 27 | Mar 3 | Mar 3 | Mar 6 | Mar 12 | Text |

|---|---|---|---|---|---|---|---|---|

| ▶️ 63* | ▶️ 96 | ▶️ 95 | ▶️ 94 | ▶️ 83 | ▶️ 83* | ▶️ 81* | ▶️ 88 | Maman peint un grand lapin blanc. |

| ▶️ 88* | ▶️ 95 | ▶️ 99 | ▶️ 99 | ▶️ 96 | ▶️ 89* | ▶️ 92* | ▶️ 83 | Un enfant intelligent mange lentement. |

| ▶️ 84* | ▶️ 97 | ▶️ 97 | ▶️ 96 | ▶️ 94 | ▶️ 95* | ▶️ 98* | ▶️ 99 | Le roi croit voir trois noix. |

| ▶️ 80* | ▶️ 85 | ▶️ 77 | ▶️ 94 | ▶️ 97 | ▶️ 92* | ▶️ 88 | Le témoin voit le chemin loin. | |

| ▶️ 72* | ▶️ 97 | ▶️ 95 | ▶️ 77 | ▶️ 92 | ▶️ 89* | ▶️ 86 | Moins de foin au loin ce matin. | |

| ▶️ 79* | ▶️ 95 | ▶️ 76 | ▶️ 95 | ▶️ 76 | ▶️ 90* | ▶️ 90* | ▶️ 79 | La laine beige sèche près du collège. |

| ▶️ 67* | ▶️ 99 | ▶️ 85 | ▶️ 81 | ▶️ 85 | ▶️ 99* | ▶️ 97* | ▶️ 97 | La croquette sèche dans l'assiette. |

| ▶️ 88* | ▶️ 99 | ▶️ 100 | ▶️ 100 | ▶️ 98 | ▶️ 100* | ▶️ 99* | ▶️ 100 | Elle mène son frère à l'hôtel. |

| ▶️ 77* | ▶️ 87 | ▶️ 99 | ▶️ 93 | ▶️ 87 | ▶️ 87* | ▶️ 99 | Le verre vert est très clair. | |

| ▶️ 100* | ▶️ 94 | ▶️ 100 | ▶️ 99 | ▶️ 99 | ▶️ 99* | ▶️ 100* | ▶️ 100 | Elle aimait manger et rêver. |

| ▶️ 78* | ▶️ 98 | ▶️ 99 | ▶️ 98 | ▶️ 98 | ▶️ 92* | ▶️ 88 | Le jeu bleu me plaît peu. | |

| ▶️ 78* | ▶️ 97 | ▶️ 85 | ▶️ 95 | ▶️ 85 | ▶️ 85 | Ce neveu veut un jeu. | ||

| ▶️ 73* | ▶️ 95 | ▶️ 95 | ▶️ 96 | ▶️ 97 | ▶️ 100 | Le feu bleu est dangereux. | ||

| ▶️ 87* | ▶️ 76 | ▶️ 65 | ▶️ 97 | ▶️ 85 | ▶️ 74* | ▶️ 85* | ▶️ 96 | Le beurre fond dans le cœur chaud. |

| ▶️ 84* | ▶️ 43 | ▶️ 85 | ▶️ 79 | ▶️ 75 | ▶️ 98 | Les fleurs de ma sœur sentent bon. | ||

| ▶️ 70* | ▶️ 86 | ▶️ 79 | ▶️ 76 | ▶️ 87 | ▶️ 84 | ▶️ 98 | Le hibou sait où il va. | |

| ▶️ 92* | ▶️ 95 | ▶️ 86 | ▶️ 92 | ▶️ 98 | ▶️ 99* | ▶️ 94 | L'homme fort mord la pomme. | |

| ▶️ 83* | ▶️ 73 | ▶️ 69 | ▶️ 81 | ▶️ 60 | ▶️ 96* | ▶️ 81 | Le sombre col tombe. | |

| ▶️ 39* | ▶️ 49 | ▶️ 69 | ▶️ 56 | ▶️ 69 | ▶️ 96* | ▶️ 94 | L'auto saute au trottoir chaud. | |

| ▶️ 82 | ▶️ 84 | ▶️ 85 | ▶️ 98 | ▶️ 94 | ▶️ 96* | ▶️ 99 | Le château d'en haut est beau. | |

| ▶️ 89 | ▶️ 85 | ▶️ 75 | ▶️ 91 | ▶️ 52 | ▶️ 75* | ▶️ 70* | ▶️ 98 | Le cœur seul pleure doucement. |

| ▶️ 98* | ▶️ 99 | ▶️ 99 | ▶️ 95 | ▶️ 93* | ▶️ 97* | ▶️ 99 | Tu es sûr du futur ? | |

| ▶️ 97 | ▶️ 93 | ▶️ 92 | ▶️ 85* | ▶️ 90 | Trois très grands trains traversent trois trop grandes rues. | |||

| ▶️ 94 | ▶️ 85 | ▶️ 97 | ▶️ 82* | ▶️ 92 | Je veux deux feux bleus, mais la reine préfère la laine beige. | |||

| ▶️ 91 | ▶️ 79 | ▶️ 87 | ▶️ 82* | ▶️ 94 | Vincent prend un bain en chantant lentement. | |||

| ▶️ 89 | ▶️ 91 | ▶️ 91 | ▶️ 84* | ▶️ 92 | La mule sûre court plus vite que le loup fou. | |||

| ▶️ 91 | ▶️ 93 | ▶️ 93 | ▶️ 92* | ▶️ 96 | Luc a bu du jus sous le pont où coule la boue. | |||

| ▶️ 88 | ▶️ 71 | ▶️ 94 | ▶️ 86* | ▶️ 92 | Le frère de Robert prépare un rare rôti rouge. | |||

| ▶️ 81 | ▶️ 84 | ▶️ 88 | ▶️ 67* | ▶️ 94 | La mule court autour du mur où hurle le loup. |

Pronunciation still feels a bit hit or miss. Sometimes I say a sentence and my tutor says "Oui," and then I say it again and he says "Non, non…" The /ʁ/ and /y/ sounds are hard.

I like seeing these compact links in an Org Mode table and being able to play them, thanks to my custom audio link type. It should be pretty easy to write a function that lets me use a keyboard shortcut to play the audio (maybe using the keys 1-9?) so that I can bounce between them for comparison.

If I screen-share from Google Chrome, I can share the tab with audio, so my tutor can listen to things at the same time. Could be fun to compare attempts so that I can try to hear the differences better. Hmm, actually, let's try adding keyboard shortcuts that let me use 1-8, n/p, and f/b to navigate and play audio. Mwahahaha! It works!
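For the Emacs side, number-key playback could look roughly like the sketch below. It only handles the number keys on the line at point (not the mouse row, and not n/p or f/b). It assumes the `audio:` link type has a `:follow` function so that `org-link-open-from-string` can play it, and all the `my-demo-` names are hypothetical.

```elisp
(require 'org)

(defun my-demo-audio-links-in-row (row)
  "Return the targets of all audio: links in ROW, an Org table row string."
  (let ((start 0) links)
    (while (string-match "\\[\\[\\(audio:[^]]+\\)\\]" row start)
      (push (match-string 1 row) links)
      (setq start (match-end 0)))
    (nreverse links)))

(defun my-demo-play-nth-audio-in-row (n)
  "Follow the Nth audio: link (counting from 1) on the current line."
  (interactive "p")
  (let ((target (nth (1- n)
                     (my-demo-audio-links-in-row
                      (buffer-substring-no-properties
                       (line-beginning-position) (line-end-position))))))
    (if target
        (org-link-open-from-string (format "[[%s]]" target))
      (user-error "No audio link number %d on this line" n))))

(defun my-demo-play-audio-for-key ()
  "Play the audio link whose position matches the digit key just pressed."
  (interactive)
  (my-demo-play-nth-audio-in-row (- last-command-event ?0)))

(defvar my-demo-audio-row-mode-map
  (let ((map (make-sparse-keymap)))
    ;; Bind the keys 1 through 8 to the same command.
    (dotimes (i 8)
      (define-key map (number-to-string (1+ i)) #'my-demo-play-audio-for-key))
    map)
  "Keymap binding 1..8 to the matching audio link on the current row.")

(define-minor-mode my-demo-audio-row-mode
  "Toggle number keys that play the audio links on the current row."
  :lighter " Audio")
```

Turning on `my-demo-audio-row-mode` in the Org buffer shadows the digit keys, so it makes sense to enable it only while comparing clips.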

2026-03-14: Second set: Mon oncle peint un grand pont blanc, etc.

Update 2026-03-14: My tutor gave me a new set of tongue-twisters. When I'm working on my own, I find it helpful to loop over an audio reference with a bit of silence after it so that I can repeat what I've heard. I have several choices for reference audio:

- I can generate an audio file using text-to-speech, like a local instance of Kokoro TTS, or a hosted service like Google Translate (via gtts-cli), ElevenLabs, or Microsoft Azure.

- I can extract a recording of my tutor from one of my sessions.

- I can extract a recording of myself from one of my tutoring sessions where my tutor said that the pronunciation is alright.
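Whichever source the reference comes from, the "bit of silence" can be added with ffmpeg. The sketch below is one possible approach, assuming ffmpeg is on the PATH and that its `apad` filter with the `pad_dur` option is available; the function names are hypothetical. The padded file can then be looped in any audio player.

```elisp
(defun my-demo-pad-with-silence-args (input output seconds)
  "Return ffmpeg arguments that write OUTPUT as INPUT plus SECONDS of silence."
  (list "-y"                                    ; overwrite OUTPUT if it exists
        "-i" (expand-file-name input)
        "-af" (format "apad=pad_dur=%s" seconds) ; append trailing silence
        (expand-file-name output)))

(defun my-demo-pad-with-silence (input output seconds)
  "Run ffmpeg to write OUTPUT: INPUT followed by SECONDS of silence.
Return OUTPUT, or signal an error if ffmpeg exits with a non-zero status."
  (unless (zerop (apply #'call-process "ffmpeg" nil nil nil
                        (my-demo-pad-with-silence-args input output seconds)))
    (error "ffmpeg failed for %s" input))
  output)

;; Example with hypothetical filenames:
;; (my-demo-pad-with-silence "/tmp/ref.opus" "/tmp/ref-padded.opus" 3)
```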

Here I stumble through the tongue-twisters. I've included reference audio from gtts, Kokoro, and Microsoft Azure for comparison.

    (my-subed-record-analyze-file-with-azure-and-references
     (subed-record-keep-last
      (subed-record-filter-skips
       (subed-parse-file
        "/home/sacha/proj/french/analysis/virelangues/2026-03-13-raphael-script.vtt")))
     "~/proj/french/analysis/virelangues-2026-03-13/2026-03-13-clip")

| Gt | Kk | Az | Me | ID | Comments | All | Acc | Flu | Comp | Conf | Text |

|---|---|---|---|---|---|---|---|---|---|---|---|

| 👂🏼 | 👂🏼 | 👂🏼 | ▶️ | 1 | X: pont | 93 | 99 | 90 | 100 | 86 | Mon oncle peint un grand pont blanc. {pont} |

| 👂🏼 | 👂🏼 | 👂🏼 | ▶️ | 2 | C'est mieux | 68 | 75 | 80 | 62 | 87 | Un singe malin prend un bon raisin rond. |

| 👂🏼 | 👂🏼 | 👂🏼 | ▶️ | 3 | Ouais, c'est ça | 83 | 94 | 78 | 91 | 89 | Dans le vent du matin, mon chien sent un bon parfum. |

| 👂🏼 | 👂🏼 | 👂🏼 | ▶️ | 4 | ok | 75 | 86 | 63 | 100 | 89 | Le soin du roi consiste à joindre chaque coin du royaume. |

| 👂🏼 | 👂🏼 | 👂🏼 | ▶️ | 5 | Ouais, c'est ça, parfait | 83 | 94 | 74 | 100 | 88 | Dans un coin du bois, le roi voit trois points noirs. |

| 👂🏼 | 👂🏼 | 👂🏼 | ▶️ | 6 | Ouais, parfait | 90 | 92 | 87 | 100 | 86 | Le feu de ce vieux four chauffe peu. |

| 👂🏼 | 👂🏼 | 👂🏼 | ▶️ | 7 | Ouais | 77 | 85 | 88 | 71 | 86 | Deux peureux veulent un peu de feu. |

| 👂🏼 | 👂🏼 | 👂🏼 | ▶️ | 8 | | 77 | 78 | 75 | 83 | 85 | Deux vieux bœufs veulent du beurre. |

| 👂🏼 | 👂🏼 | 👂🏼 | ▶️ | 9 | Ouais, parfait | 92 | 94 | 89 | 100 | 89 | Elle aimait marcher près de la rivière. |

| 👂🏼 | 👂🏼 | 👂🏼 | ▶️ | 10 | Ok, c'est bien | 93 | 98 | 89 | 100 | 90 | Je vais essayer de réparer la fenêtre. |

| 👂🏼 | 👂🏼 | 👂🏼 | ▶️ | 11 | Okay | 83 | 87 | 76 | 100 | 89 | Le bébé préfère le lait frais. |

| 👂🏼 | 👂🏼 | 👂🏼 | ▶️ | 12 | | 77 | 92 | 70 | 86 | 90 | Charlotte cherche ses chaussures dans la chambre. |

| 👂🏼 | 👂🏼 | 👂🏼 | ▶️ | 13 | Okay | 91 | 90 | 94 | 91 | 88 | Un chasseur sachant chasser sans son chien est-il un bon chasseur ? |

| 👂🏼 | 👂🏼 | 👂🏼 | ▶️ | 14 | Ouais | 91 | 88 | 92 | 100 | 91 | Le journaliste voyage en janvier au Japon. |

| 👂🏼 | 👂🏼 | 👂🏼 | ▶️ | 15 | C'est bien (X: dans un) | 91 | 88 | 94 | 100 | 88 | Georges joue du jazz dans un grand bar. {dans un} |

| 👂🏼 | 👂🏼 | 👂🏼 | ▶️ | 16 | C'est bien | 88 | 87 | 94 | 88 | 85 | Un jeune joueur joue dans le grand gymnase. |

| 👂🏼 | 👂🏼 | 👂🏼 | ▶️ | 17 | | 95 | 94 | 96 | 100 | 91 | Le compagnon du montagnard soigne un agneau. |

| 👂🏼 | 👂🏼 | 👂🏼 | ▶️ | 18 | | 85 | 88 | 84 | 86 | 89 | La cigogne soigne l’agneau dans la campagne. |

| 👂🏼 | 👂🏼 | 👂🏼 | ▶️ | 19 | grenouille | 71 | 80 | 68 | 75 | 86 | La grenouille fouille les feuilles dans la broussaille. |

And comparing clips over time:

    (learn-lang-subed-record-summarize-segments
     (split-string (org-file-contents "~/proj/french/analysis/virelangues-2026-03-13/phrases.txt") "\n")
     '(("/home/sacha/proj/french/analysis/virelangues/2026-03-13-raphael-script.vtt" . "Mar 13")
       ("/home/sacha/proj/french/analysis/virelangues-2026-03-13/2026-03-20-raphael-script.vtt" . "Mar 20")
       ("/home/sacha/proj/french/analysis/virelangues-2026-03-13/2026-03-24-raphael-script.vtt" . "Mar 24")
       ("/home/sacha/proj/french/analysis/virelangues-2026-03-13/2026-03-27-script.vtt" . "Mar 27"))
     "~/proj/french/analysis/virelangues-2026-03-13/all-"
     #'learn-lang-subed-record-get-last-attempt)

| Mar 13 | Mar 20 | Mar 24 | Mar 27 | Text |

|---|---|---|---|---|

| ▶️ 93* | ▶️ 89 | ▶️ 71* | ▶️ 88 | Mon oncle peint un grand pont blanc. |

| ▶️ 68* | ▶️ 82* | ▶️ 90* | ▶️ 91 | Un singe malin prend un bon raisin rond. |

| ▶️ 83* | ▶️ 89* | ▶️ 93* | ▶️ 95 | Dans le vent du matin, mon chien sent un bon parfum. |

| ▶️ 75* | ▶️ 84* | ▶️ 86* | ▶️ 90 | Le soin du roi consiste à joindre chaque coin du royaume. |

| ▶️ 83* | ▶️ 88* | ▶️ 96 | ▶️ 97 | Dans un coin du bois, le roi voit trois points noirs. |

| ▶️ 90* | ▶️ 90* | ▶️ 90* | ▶️ 99 | Le feu de ce vieux four chauffe peu. |

| ▶️ 77* | ▶️ 77* | ▶️ 84* | ▶️ 89 | Deux peureux veulent un peu de feu. |

| ▶️ 77 | ▶️ 80* | ▶️ 71* | ▶️ 52 | Deux vieux bœufs veulent du beurre. |

| ▶️ 92* | ▶️ 88* | ▶️ 100* | ▶️ 96 | Elle aimait marcher près de la rivière. |

| ▶️ 93* | ▶️ 87* | ▶️ 98* | ▶️ 94 | Je vais essayer de réparer la fenêtre. |

| ▶️ 83* | ▶️ 85* | ▶️ 93* | ▶️ 97 | Le bébé préfère le lait frais. |

| ▶️ 77 | ▶️ 78* | ▶️ 88* | ▶️ 87 | Charlotte cherche ses chaussures dans la chambre. |

| ▶️ 91* | ▶️ 92* | ▶️ 97* | ▶️ 92 | Un chasseur sachant chasser sans son chien est-il un bon chasseur ? |

| ▶️ 91* | ▶️ 84* | ▶️ 88* | ▶️ 91 | Le journaliste voyage en janvier au Japon. |

| ▶️ 91* | ▶️ 79* | ▶️ 95 | Georges joue du jazz dans un grand bar. | |

| ▶️ 88* | ▶️ 99* | ▶️ 90* | ▶️ 96 | Un jeune joueur joue dans le grand gymnase. |

| ▶️ 95 | ▶️ 95 | ▶️ 94* | ▶️ 93 | Le compagnon du montagnard soigne un agneau. |

| ▶️ 85 | ▶️ 63* | ▶️ 86* | ▶️ 100 | La cigogne soigne l'agneau dans la campagne. |

| ▶️ 71* | ▶️ 64* | ▶️ 79* | ▶️ 91 | La grenouille fouille les feuilles dans la broussaille. |

| ▶️ 66* | ▶️ 79* | ▶️ 73 | La vieille abeille travaille dans la broussaille. | |

| ▶️ 96* | ▶️ 90* | ▶️ 95 | Le juge Jean juge justement les jolis bijoux de Julie. | |

| ▶️ 92* | ▶️ 93 | Ma compagne m'accompagne à la campagne avec une autre compagne. | ||

| ▶️ 82 | Une tortue têtue marche dessus sous une pluie continue. | |||

| ▶️ 76 | Une mule têtue pousse une roue lourde sur une rue boueuse. | |||

| ▶️ 73 | Trois gros rats gris grimpent dans trois grands greniers rugueux. | |||

| ▶️ 84 | Un professeur rigoureux corrige un rapport sur une erreur rare. |

The code

Code for summarizing the segments

    (defun my-subed-record-analyze-file-with-azure-and-references (subtitles prefix &optional always-create)
      "Return table rows comparing each of SUBTITLES with TTS reference audio.
    PREFIX is the filename prefix for the extracted and generated clips.
    If ALWAYS-CREATE is non-nil, recreate audio files even if they exist."
      (cons
       ;; Header row: one label per cell, including the sentence text.
       '("Gt" "Kk" "Az" "Me" "ID" "Comments" "All" "Acc" "Flu" "Comp" "Conf" "Text")
       (seq-map-indexed
        (lambda (sub i)
          (let ((sound-file (expand-file-name (format "%s-%02d.opus"
                                                      prefix
                                                      (1+ i))))
                (tts-services
                 '(("gtts" . learn-lang-tts-gtts-say)
                   ("kokoro" . learn-lang-tts-kokoro-fastapi-say)
                   ("azure" . learn-lang-tts-azure-say)))
                tts-files
                (note (subed-record-get-directive "#+NOTE" (elt sub 4))))
            ;; Extract my own attempt for this subtitle.
            (when (or always-create (not (file-exists-p sound-file)))
              (subed-record-extract-audio-for-current-subtitle-to-file sound-file sub))
            ;; Generate one reference clip per TTS service and link to it.
            (setq
             tts-files
             (mapcar
              (lambda (row)
                (let ((reference (format "%s-%s-%02d.opus" prefix (car row) (1+ i))))
                  (when (or always-create (not (file-exists-p reference)))
                    (funcall (cdr row)
                             (subed-record-simplify (elt sub 3))
                             'sync
                             reference))
                  (org-link-make-string
                   (concat "audio:" reference "?icon=t&note=" (url-hexify-string (car row)))
                   "👂🏼")))
              tts-services))
            ;; Row: reference links, my clip, ID, note, Azure scores, text.
            (append
             tts-files
             (list
              (org-link-make-string
               (concat "audio:" sound-file "?icon=t"
                       (format "&source-start=%s" (elt sub 1))
                       (if (and note (not (string= note "")))
                           (format "&title=%s"
                                   (url-hexify-string note))
                         ""))
               "▶️")
              (format "%d" (1+ i))
              (or note ""))
             (learn-lang-azure-subed-record-parse (elt sub 4))
             (list
              (elt sub 3)))))
        subtitles)))

Jumping to the source again:

    (defun my-subed-record-org-clip-view-source ()
      "Open the source subtitle file for the audio link at point.
    Uses the link's source-file and source-start query parameters."
      (interactive)
      (let* ((params
              ;; Parse the part of the link after the question mark.
              (url-parse-query-string
               (cdr
                (url-path-and-query
                 (url-generic-parse-url
                  (org-element-property :raw-link (org-element-context))))))))
        (find-file (car (alist-get "source-file" params nil nil #'string=)))
        (subed-jump-to-subtitle-id-at-msecs
         (string-to-number (car (alist-get "source-start" params nil nil #'string=))))))
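To see how the query parameters survive the round trip, here is a small self-contained sketch. It builds a link the same way the table code above does and reads the values back with the same built-in URL functions. The helper names and the clip filename are hypothetical.

```elisp
(require 'url-parse)
(require 'url-util)

(defun my-demo-audio-link-params (link)
  "Return the query parameters of LINK as an alist of (KEY VALUE...) lists."
  (let ((query (cdr (url-path-and-query (url-generic-parse-url link)))))
    (and query (url-parse-query-string query))))

(defun my-demo-audio-link-param (link key)
  "Return the first value of KEY in LINK's query string, or nil if absent."
  (car (alist-get key (my-demo-audio-link-params link) nil nil #'string=)))

;; Build a link with a start time and a hex-encoded note, then read it back.
(let ((link (concat "audio:clip-01.opus?icon=t"
                    (format "&source-start=%s" 12340)
                    (format "&title=%s" (url-hexify-string "X: pont")))))
  (list (my-demo-audio-link-param link "source-start")
        (my-demo-audio-link-param link "title")
        (my-demo-audio-link-param link "source-file")))
;; => ("12340" "X: pont" nil)
```

The last value is nil because this example link has no `source-file` parameter, so a command like the one above only works on links that include one.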

I've moved the rest of the code to sachac/learn-lang on Codeberg.