May 4 to May 10

Monday 4

I talked about finances with my sister, who lives in the Netherlands. She can't transfer the money from the Philippines to the Netherlands, so I have to help her.

I took my daughter to her gymnastics class. She enjoyed it.

Tuesday 5

My daughter was very proud of having managed to give two presentations when some of her classmates weren't ready to go.

We started working on a swim dress for my daughter. There was no sewing pattern for her design, so I made a prototype from fabric scraps left over from her long swim dress from a few years ago.

To my great relief, the bank transfer went through. Apparently Wise can help me transfer the money from the Philippines to Canada.

Wednesday 6

My husband, my daughter, and I went to the cardiologist, who was very far away: almost two hours by subway and bus each way. My daughter was very bored, but she wanted to get her palpitations treated, so she made the effort. Afterwards, we bought rewards at the nearby supermarket. She chose a small bottle of drinkable yogurt.

I took my daughter and her friend to the park to play. There was a boy who kept bothering them and who was too young to reason with, so I had to use my Mom Voice to get him to stop.

Thursday 7

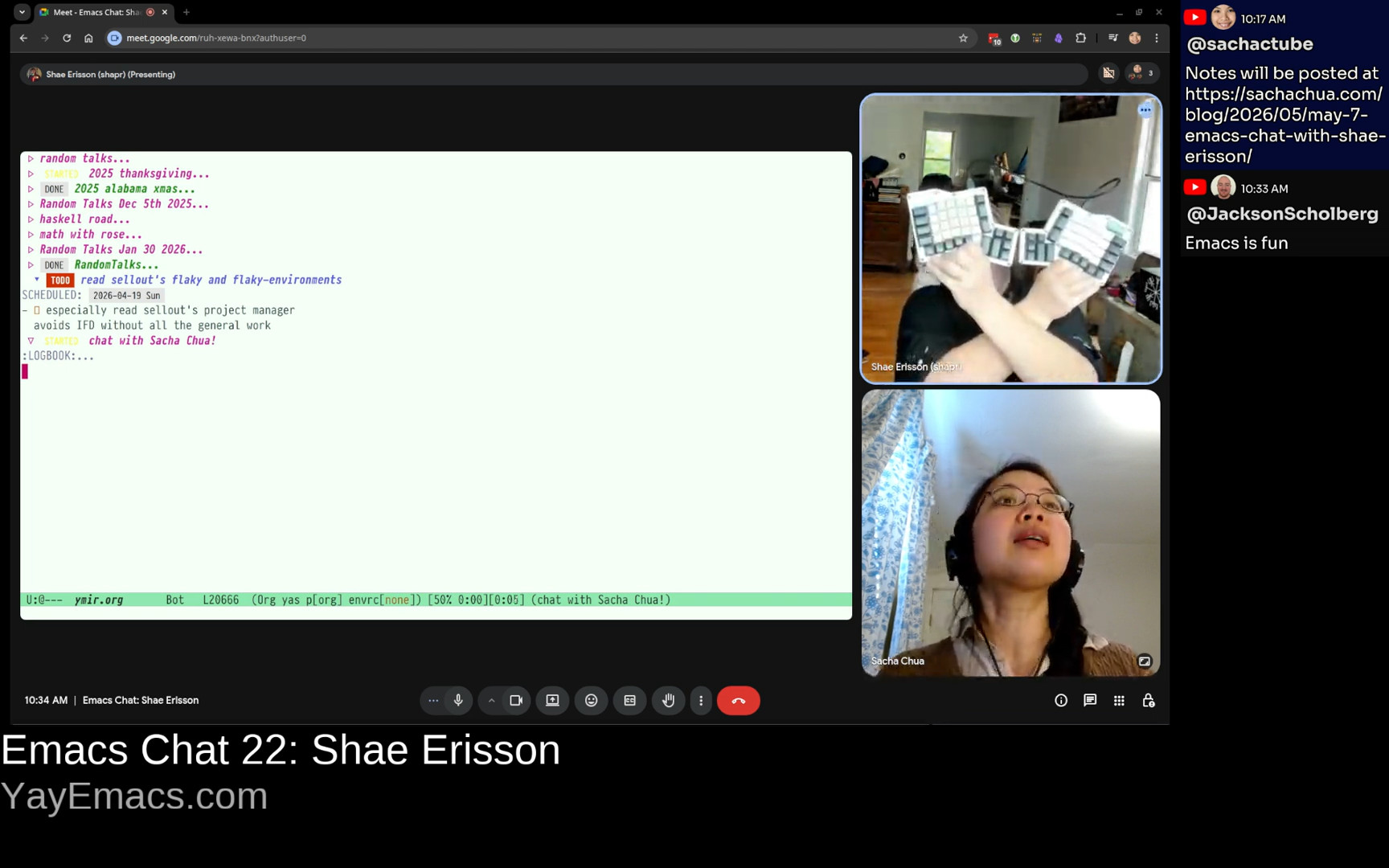

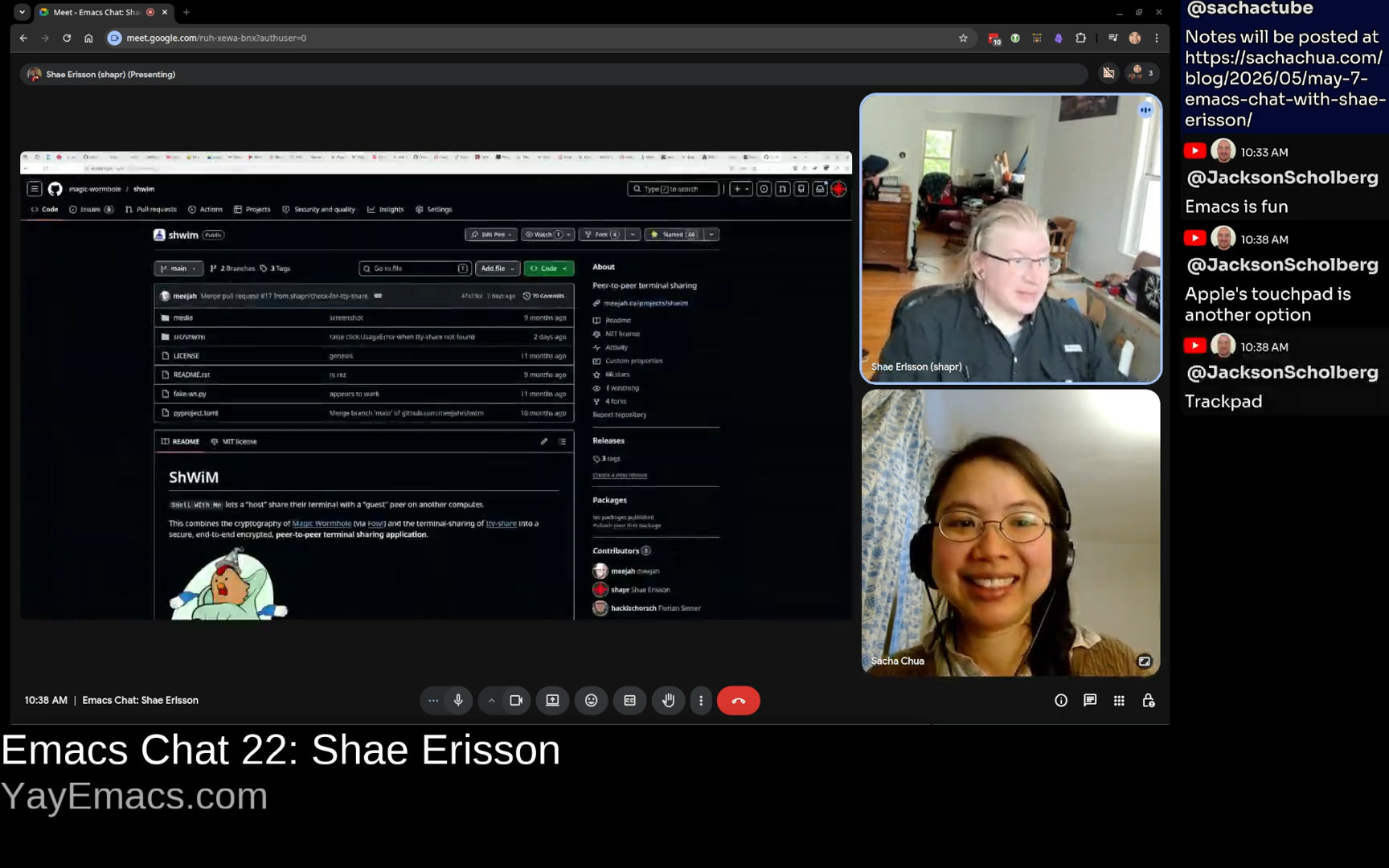

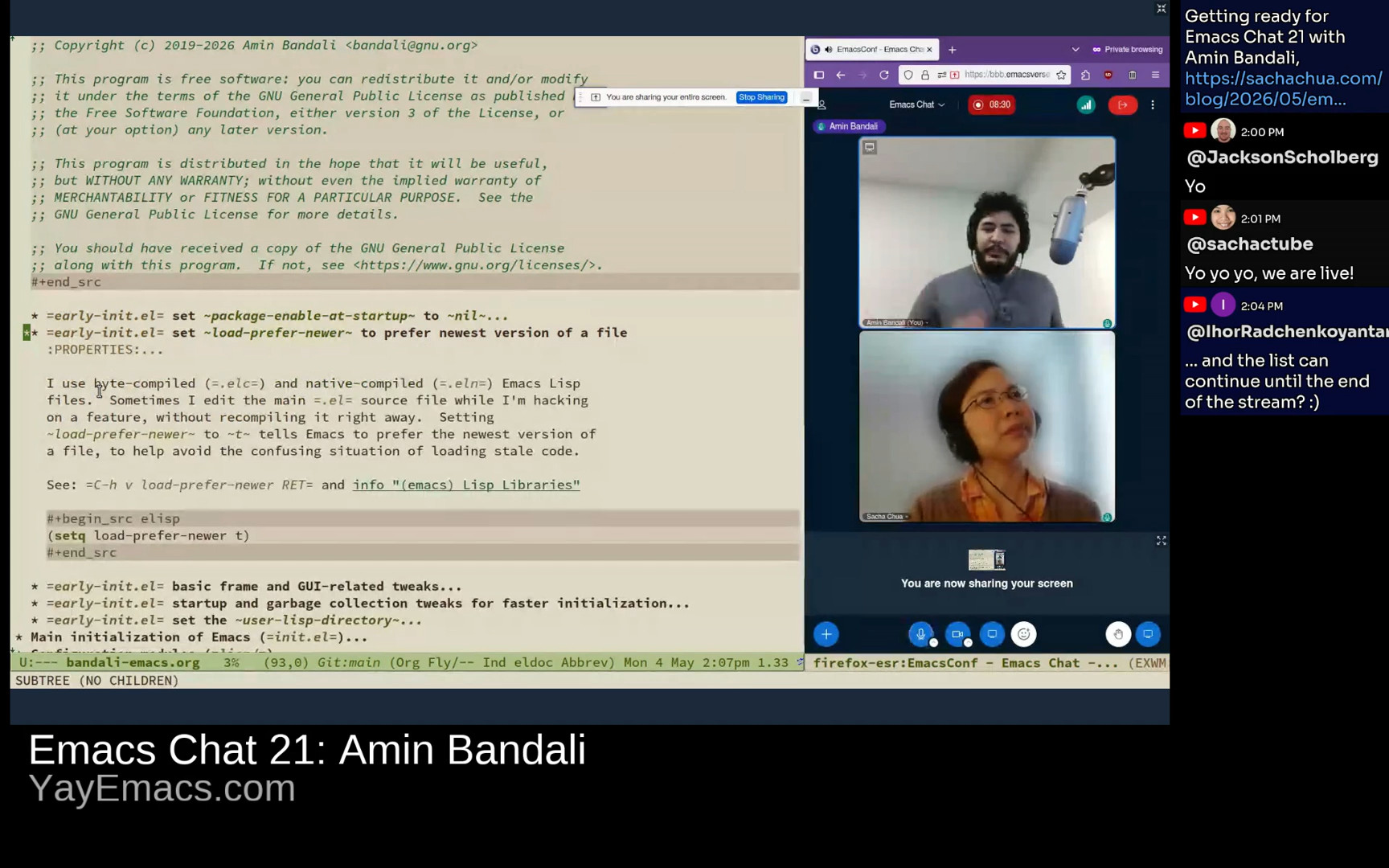

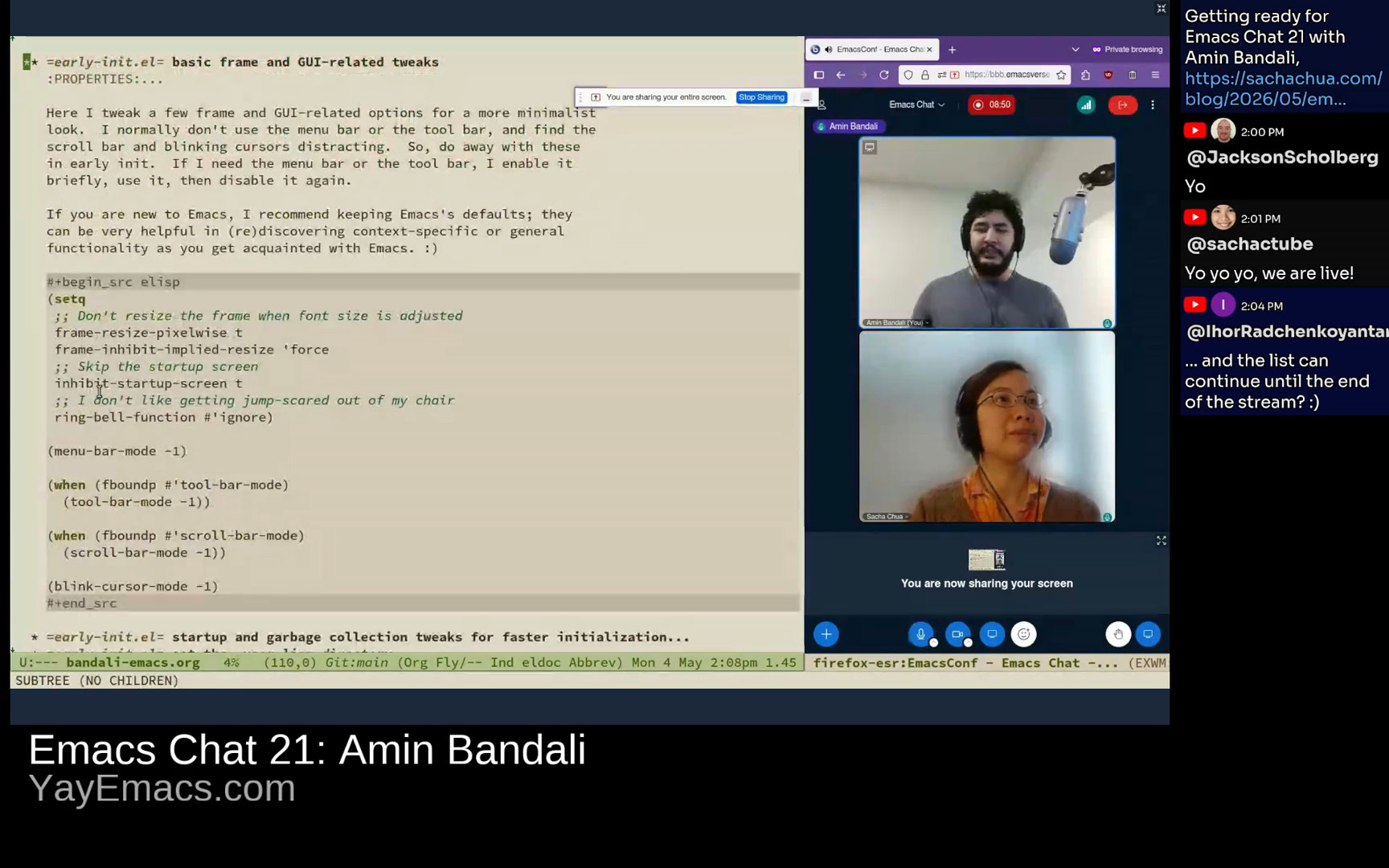

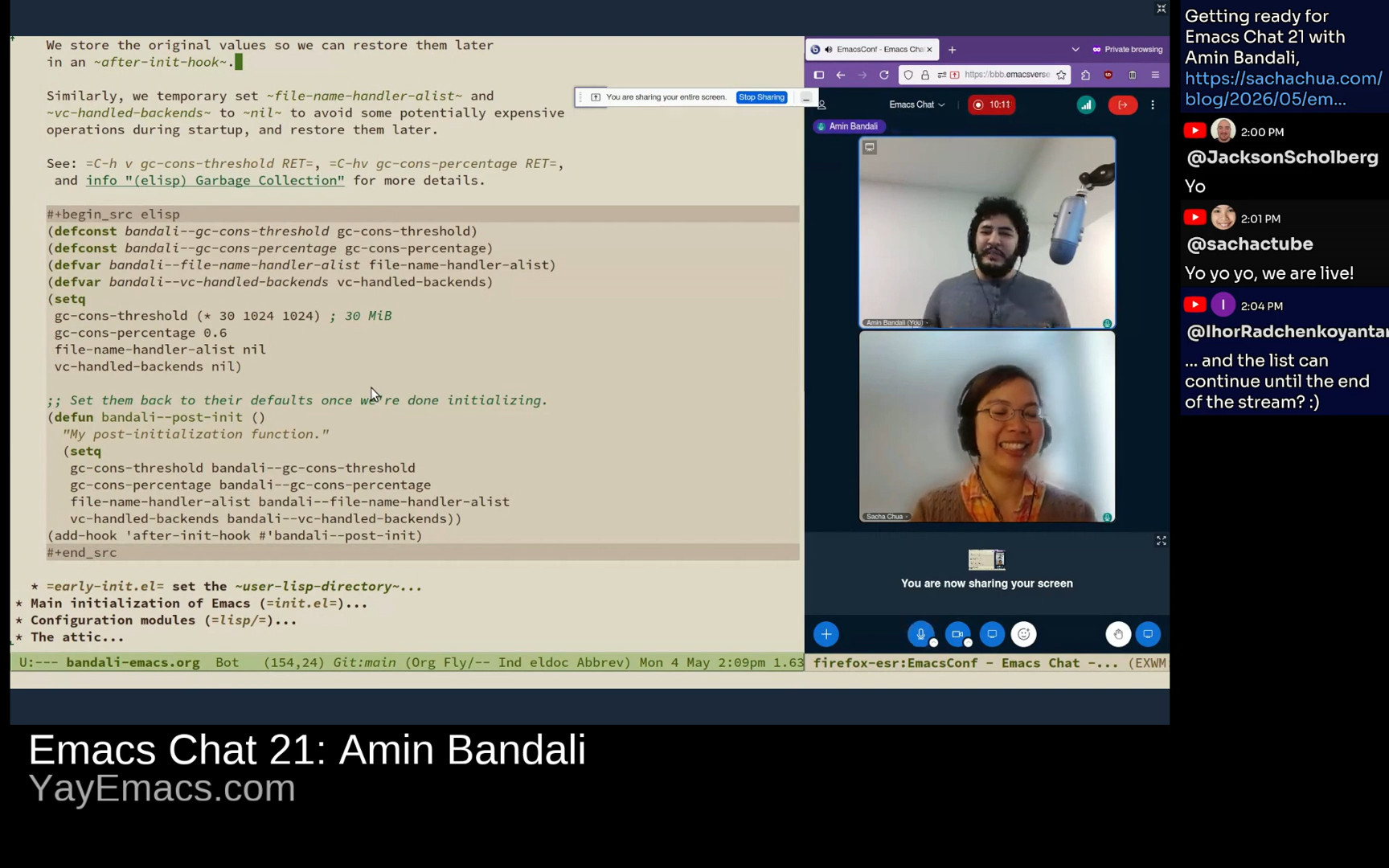

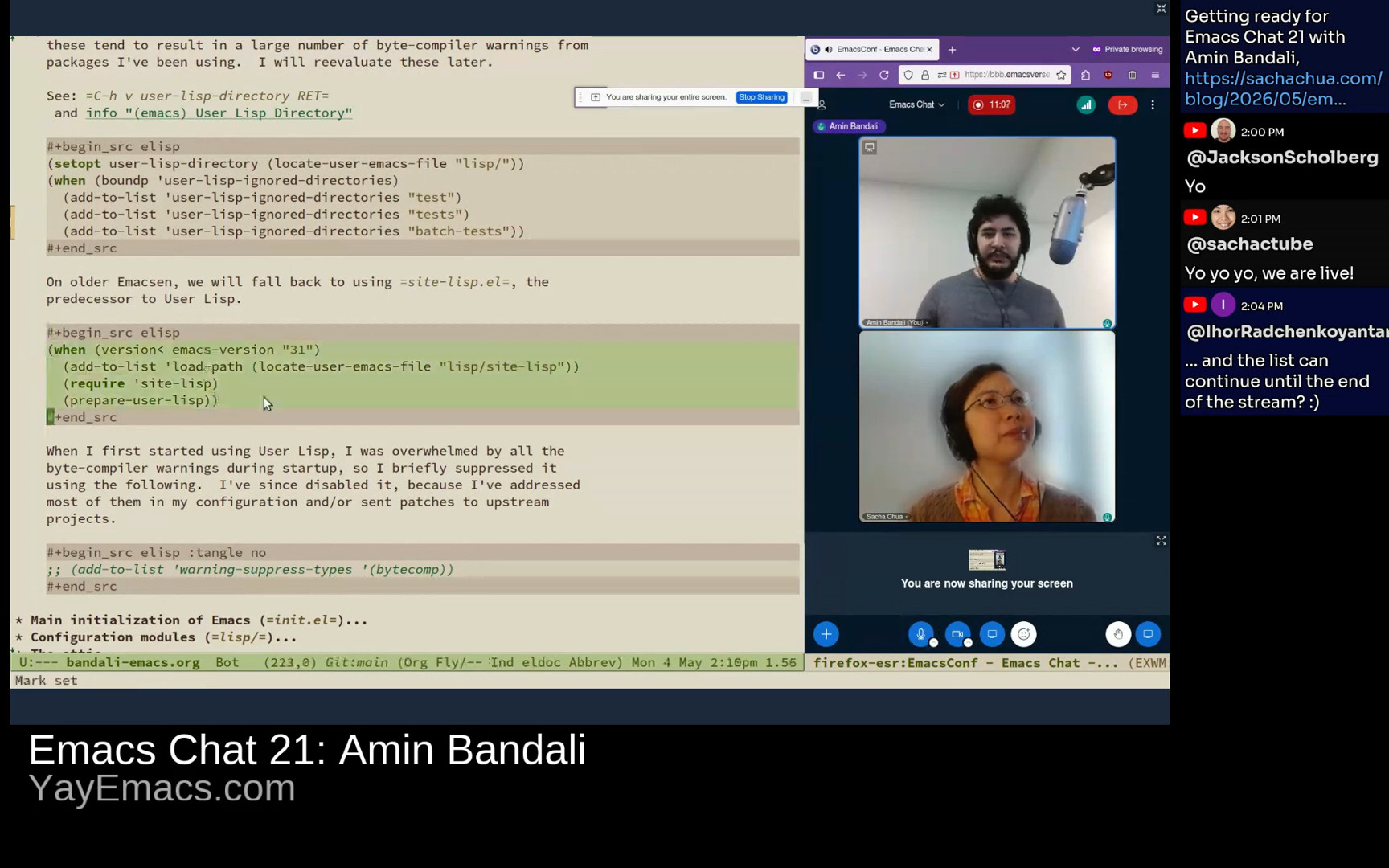

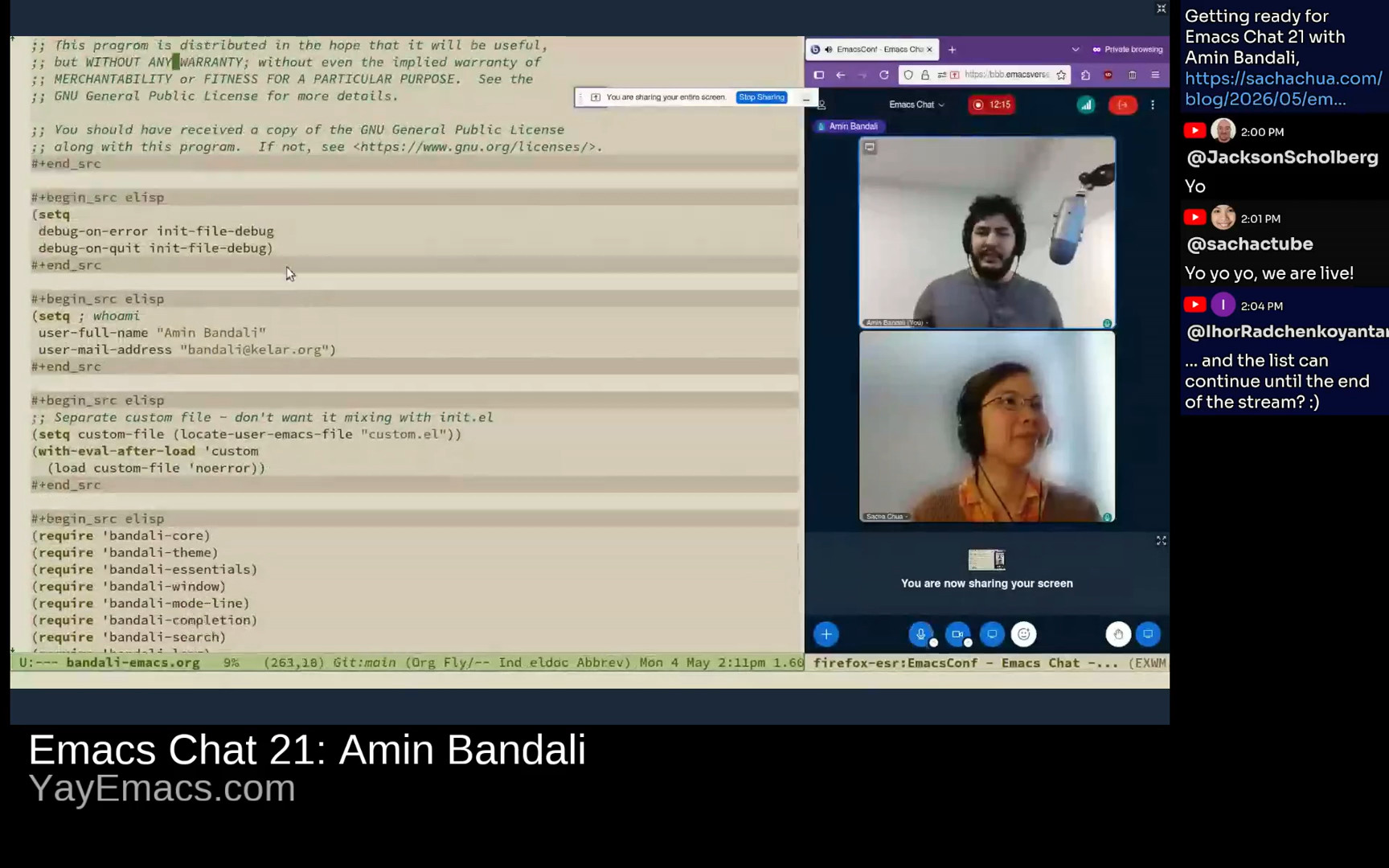

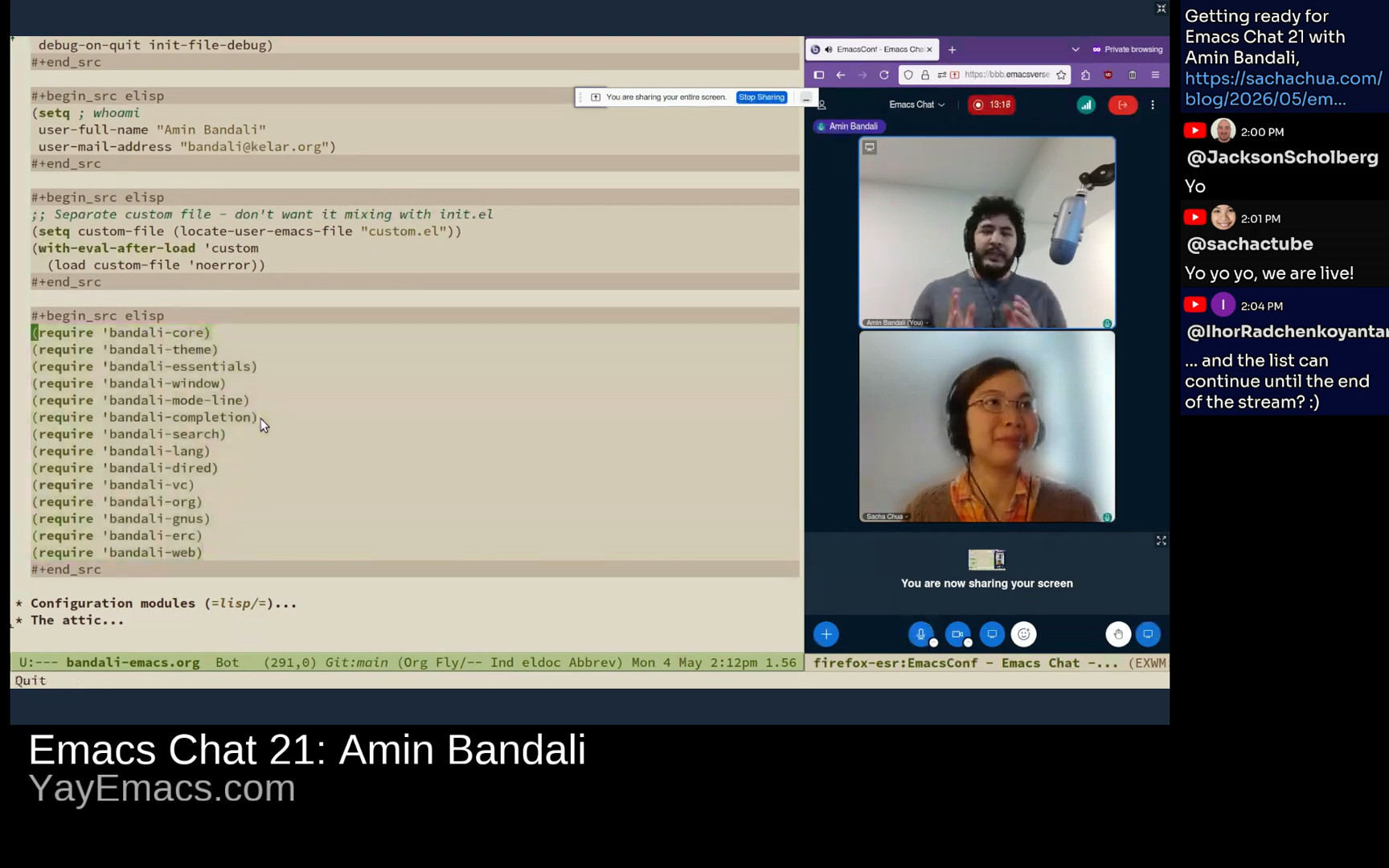

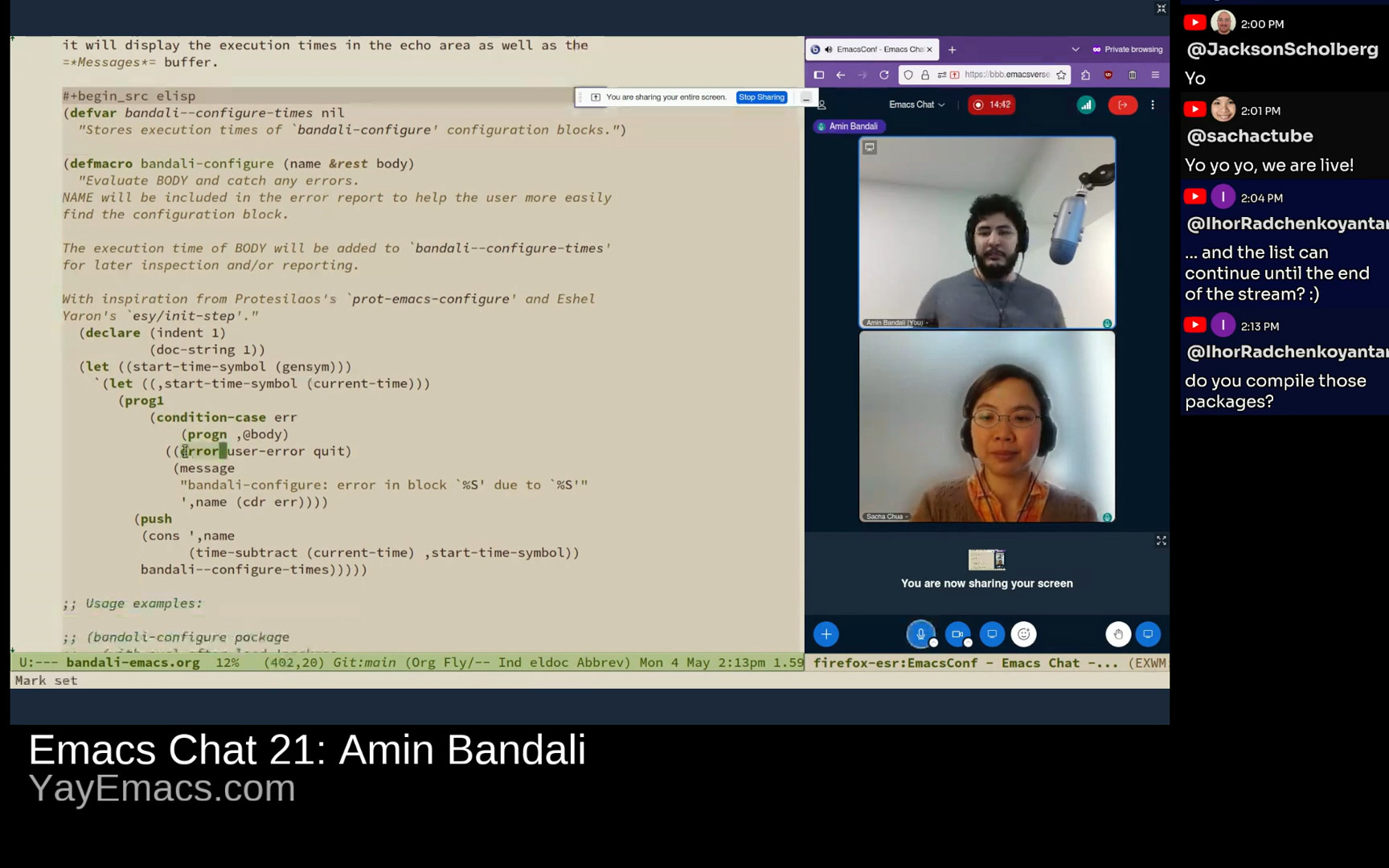

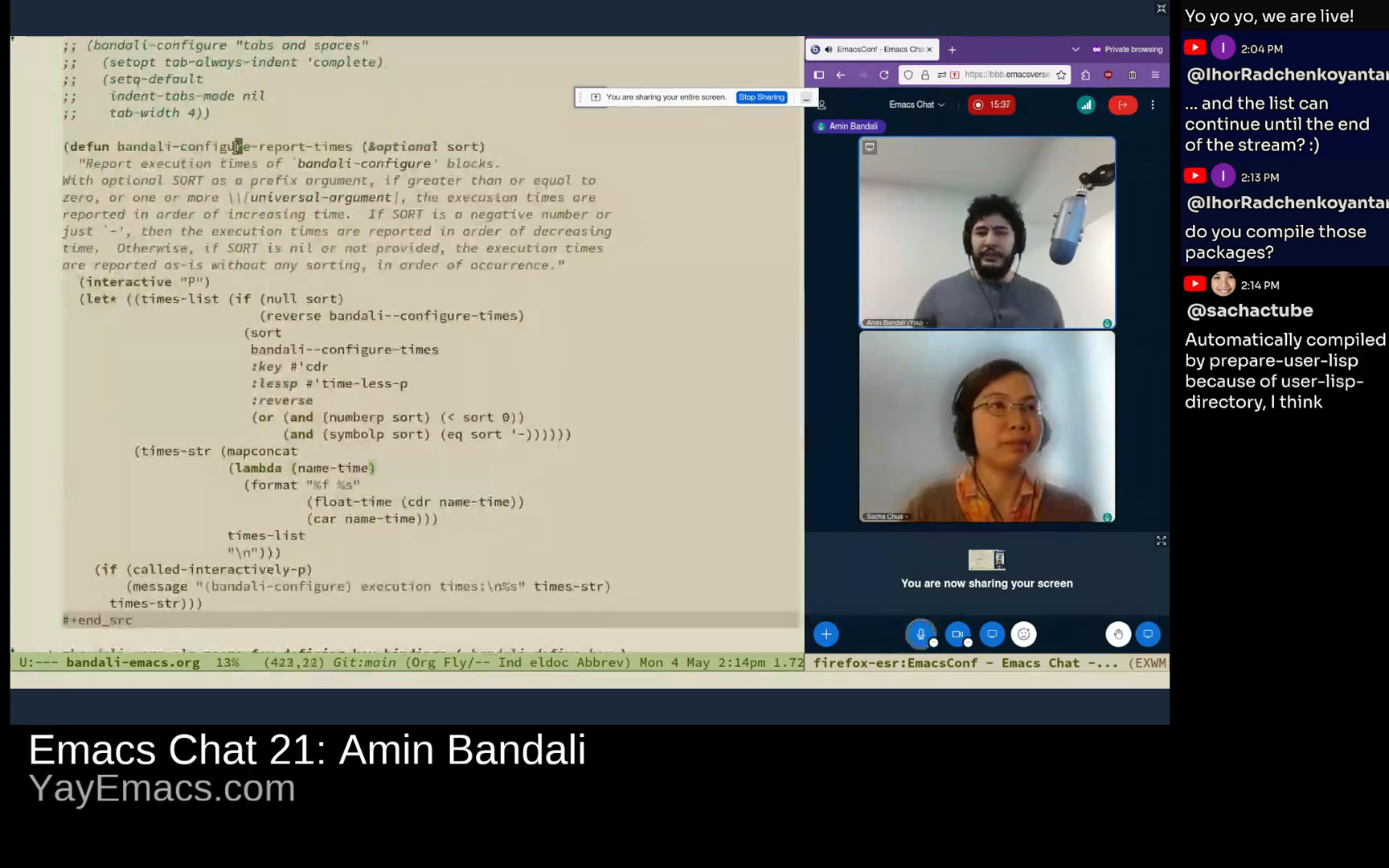

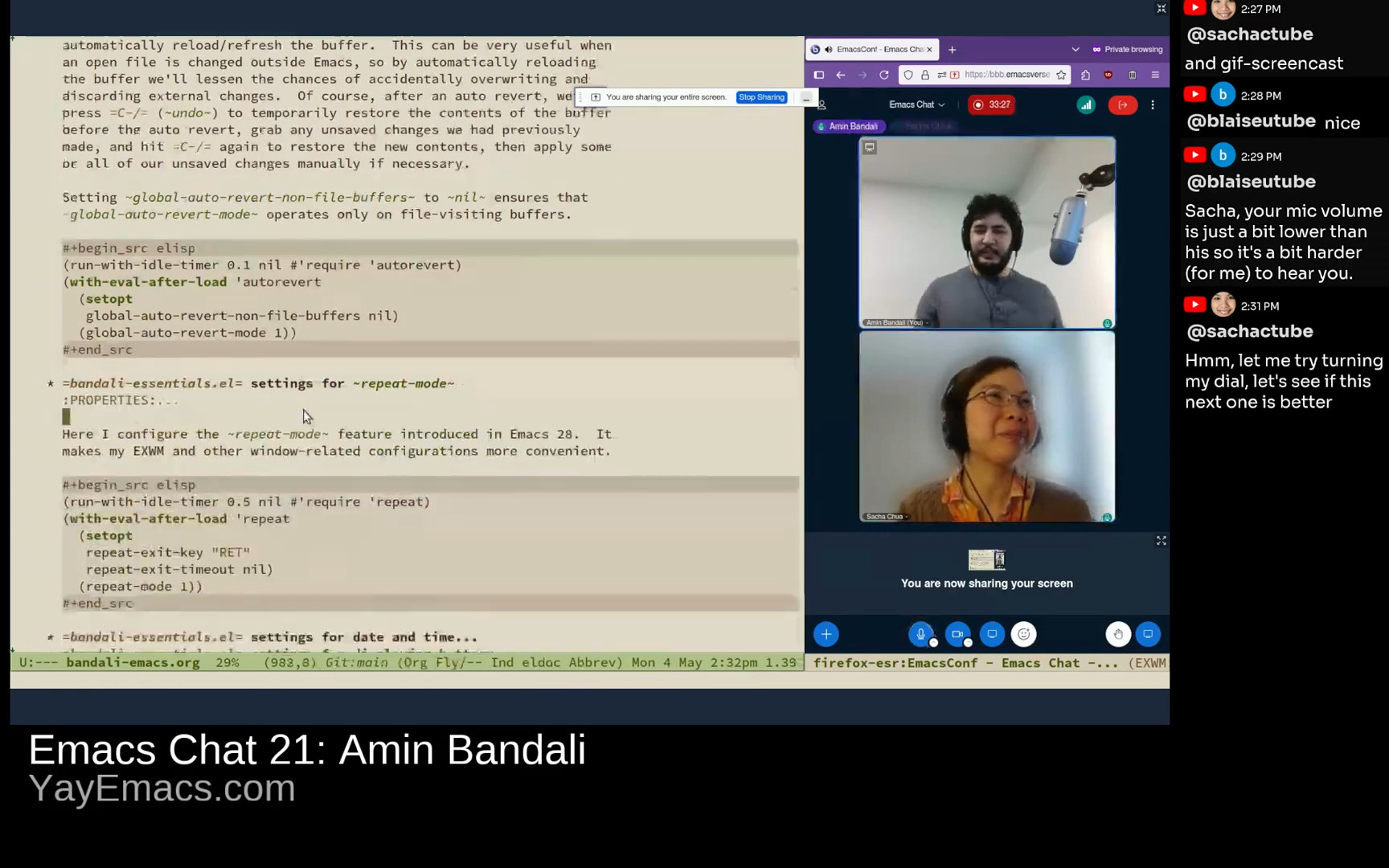

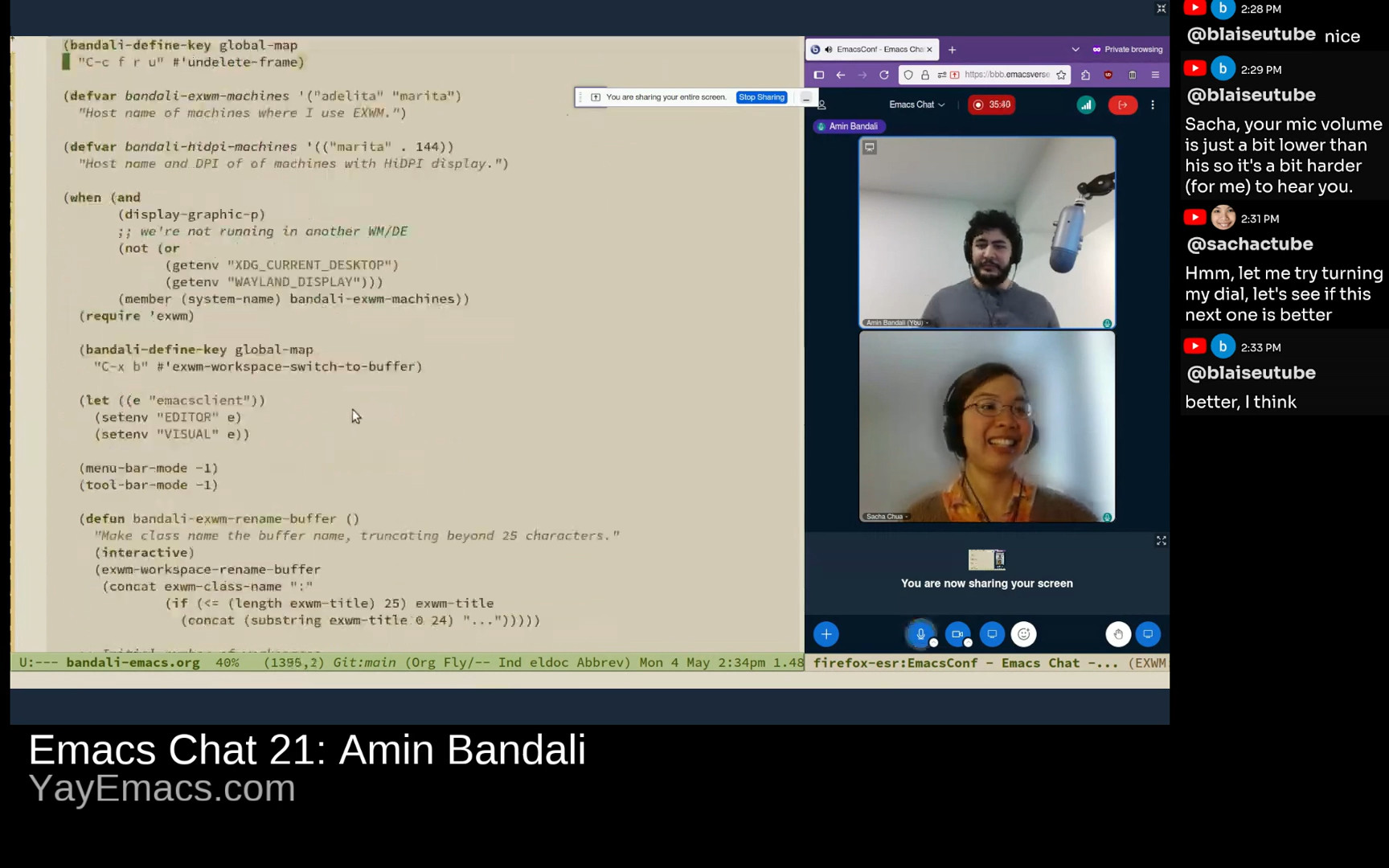

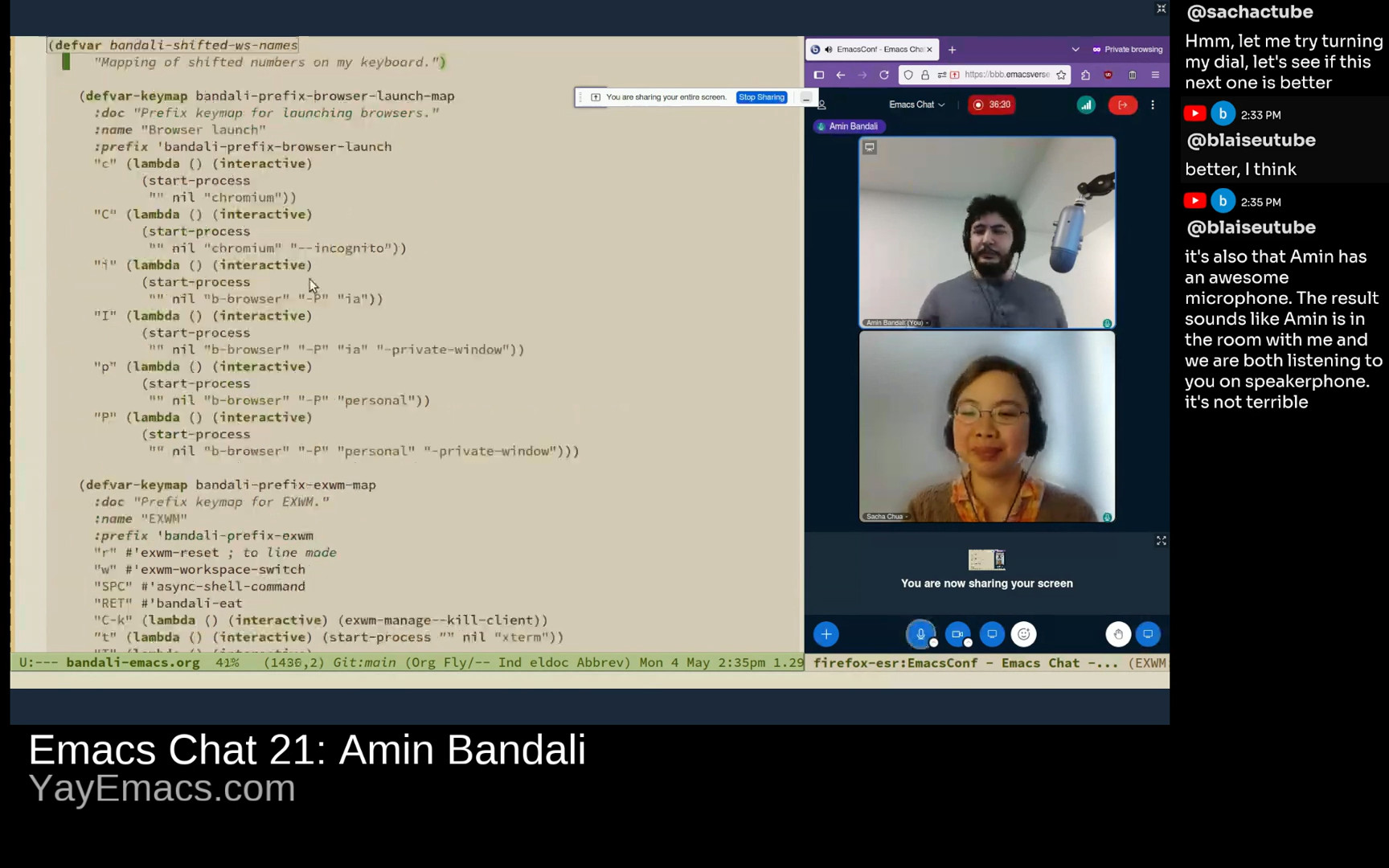

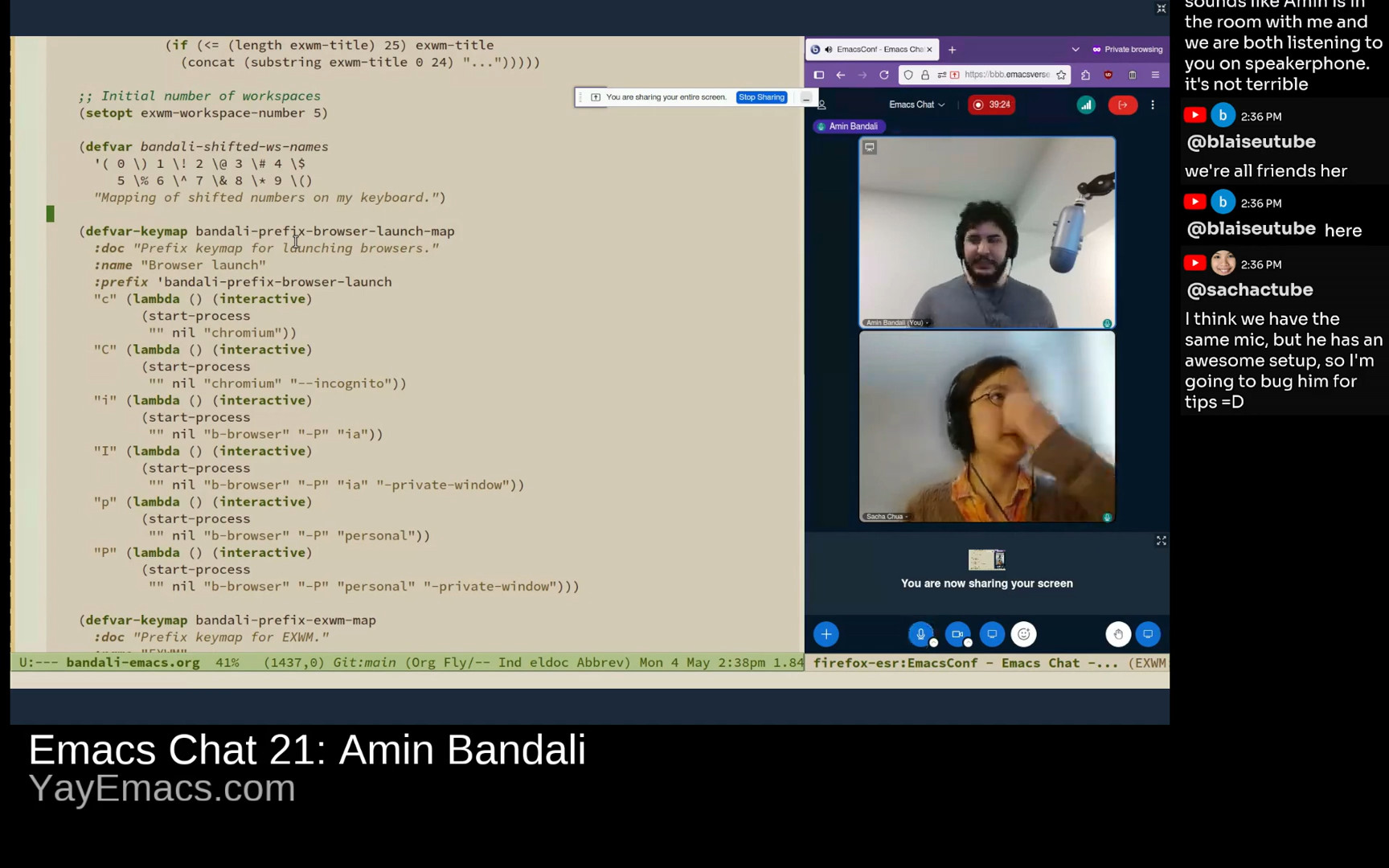

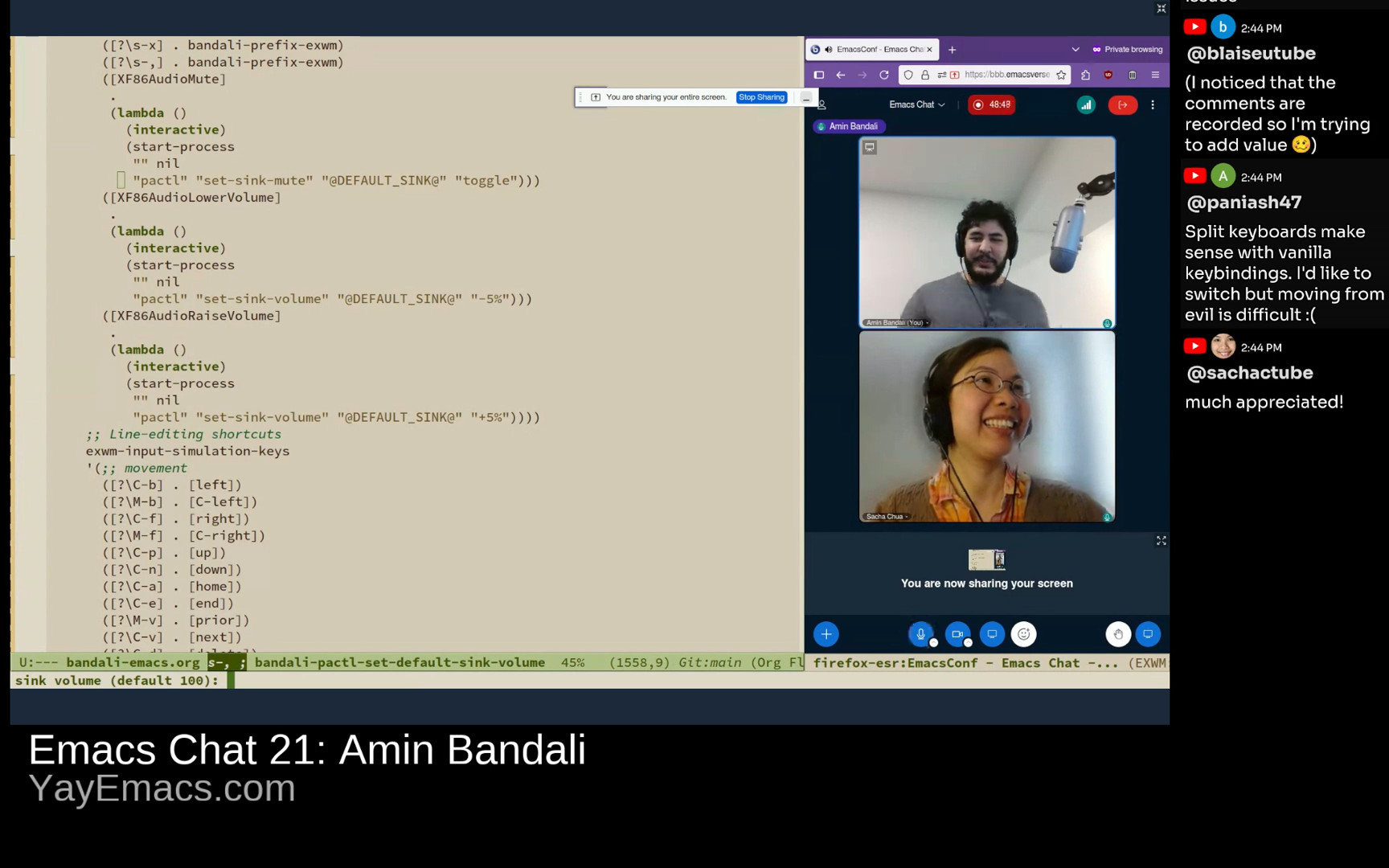

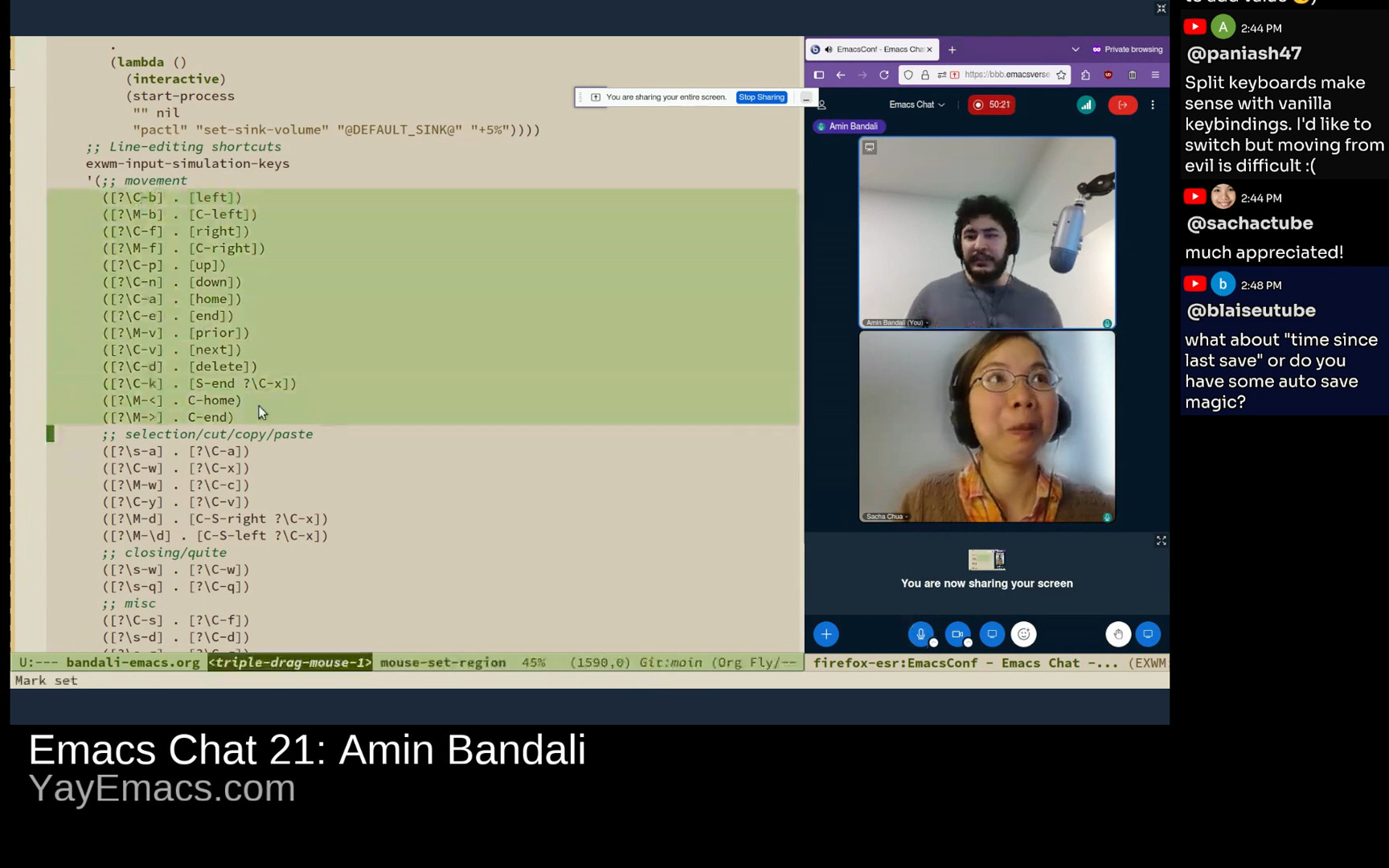

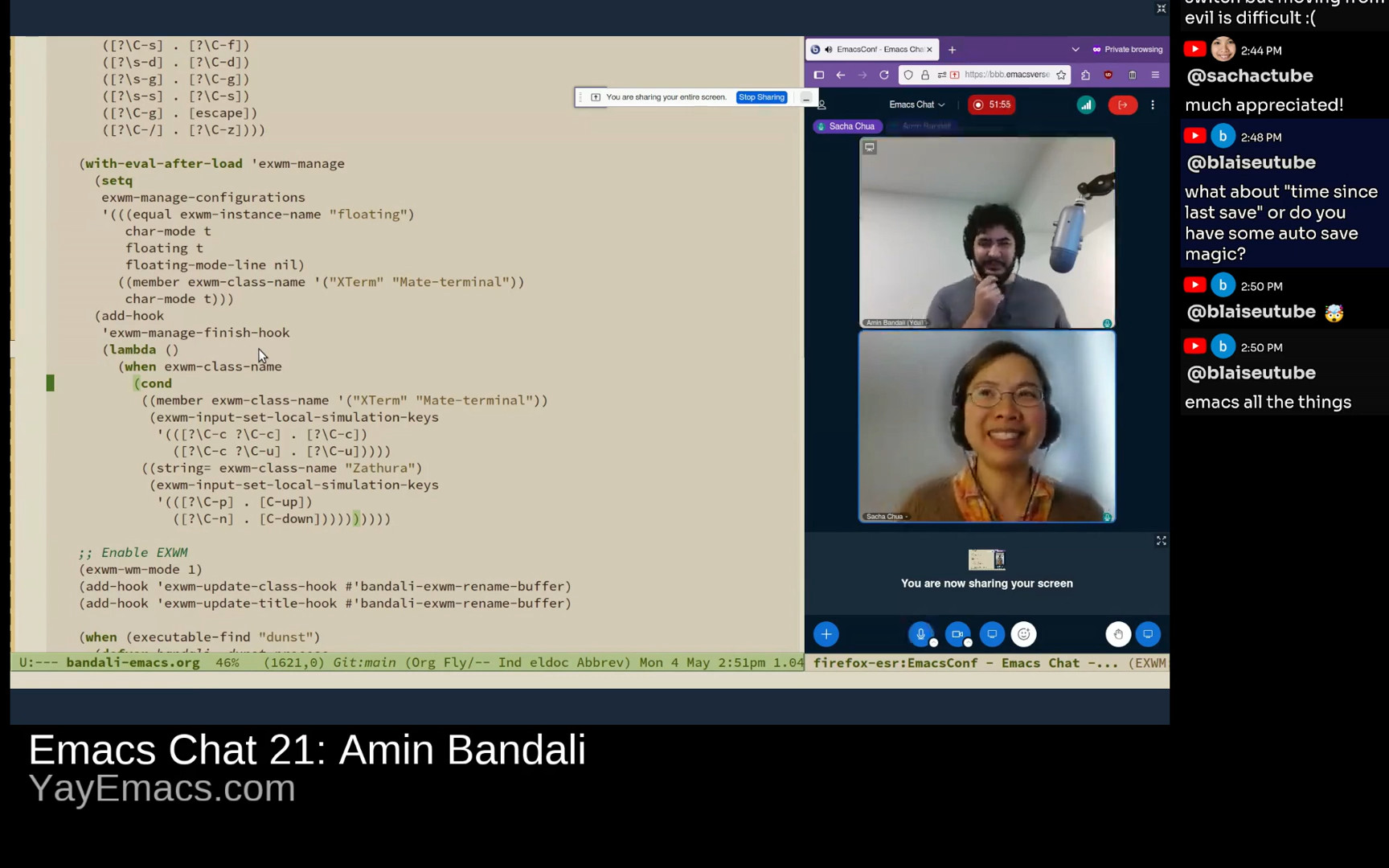

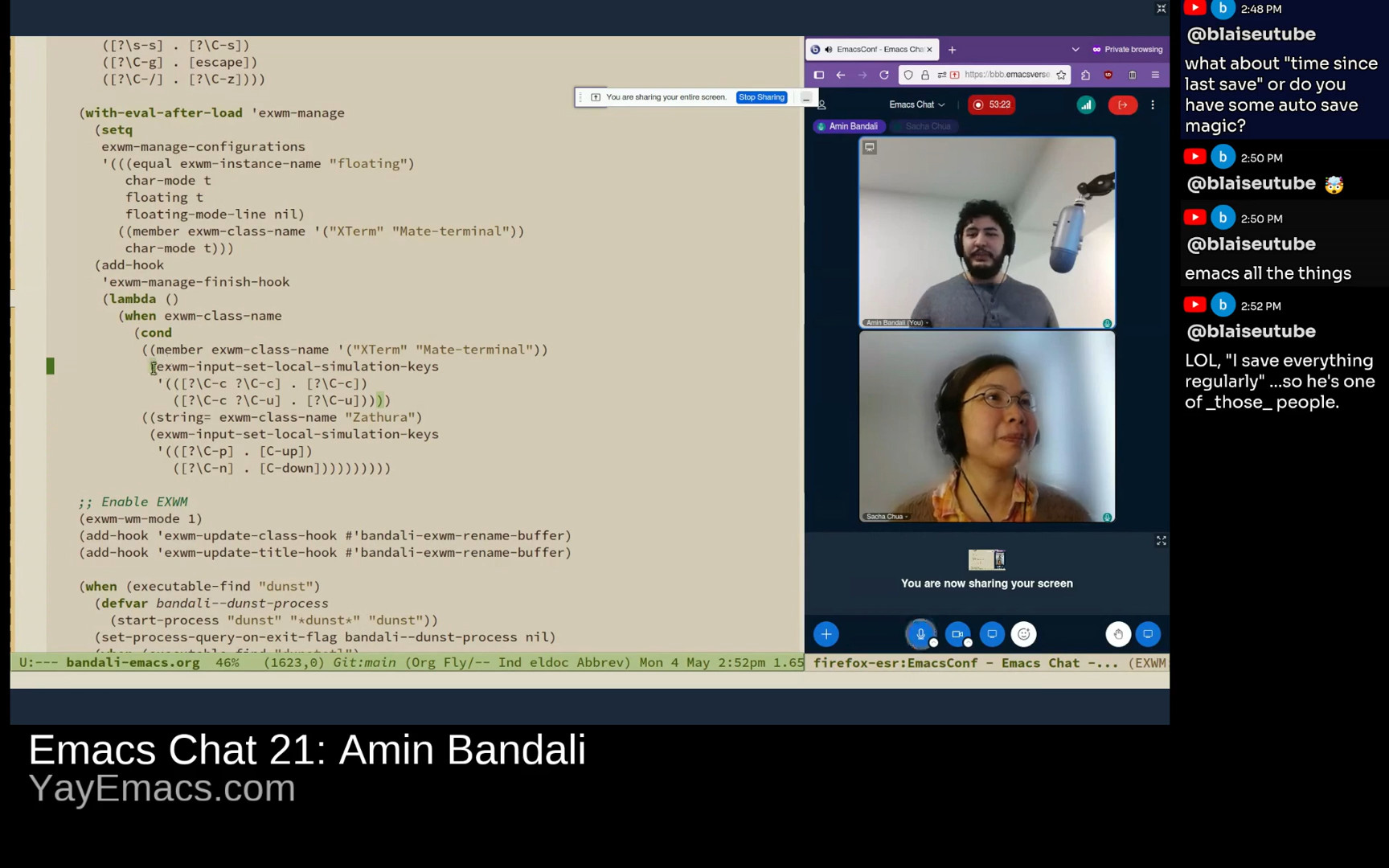

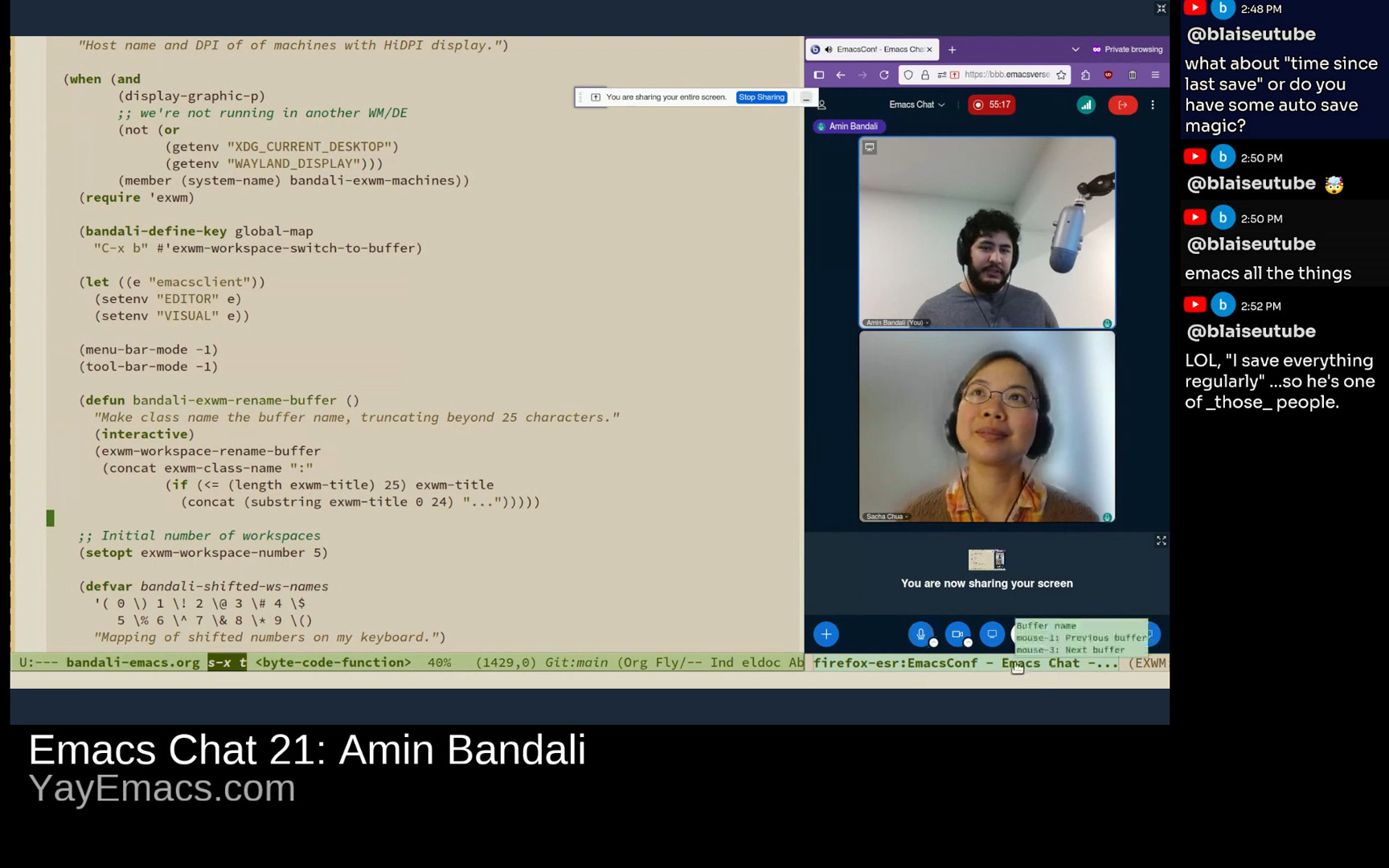

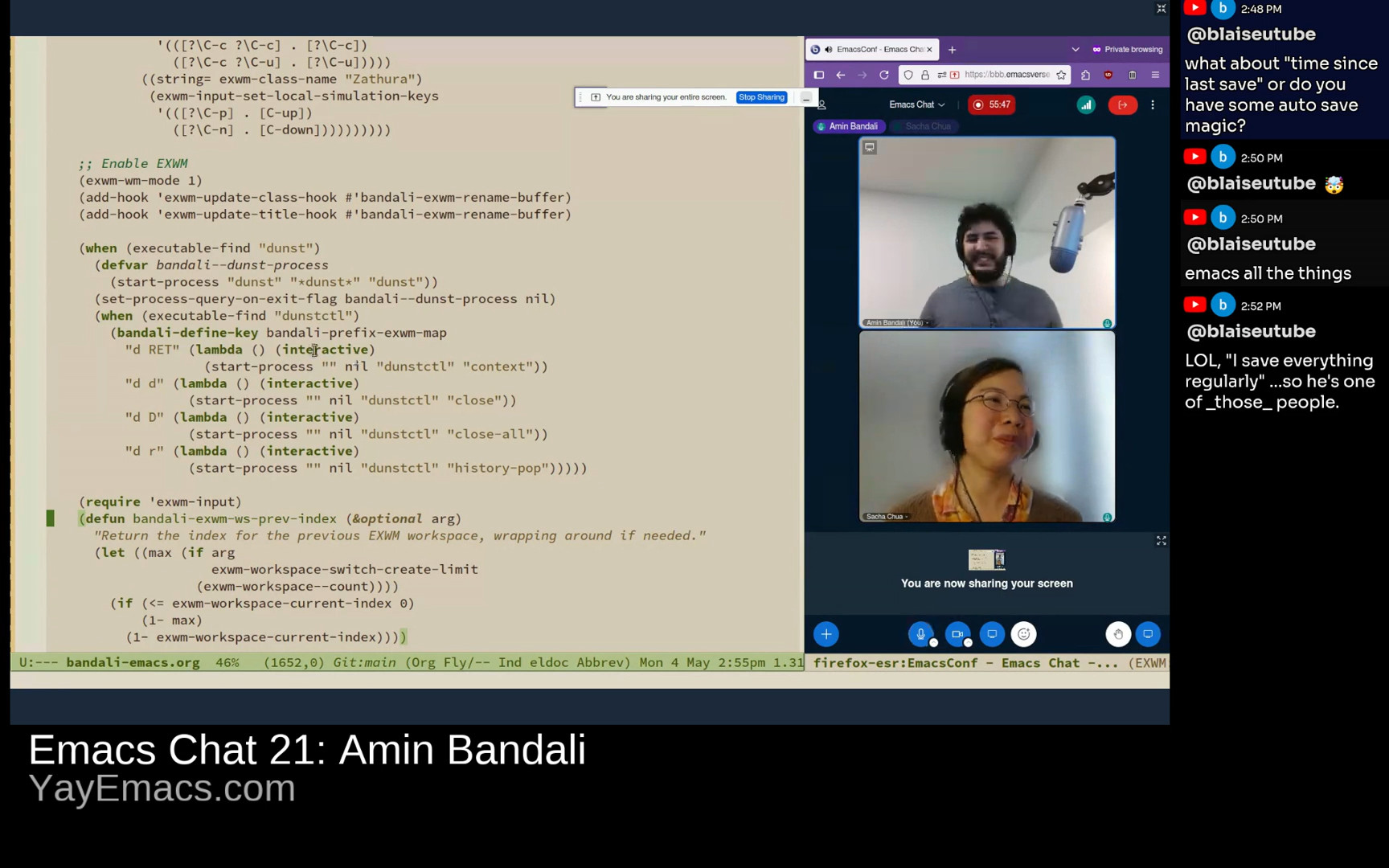

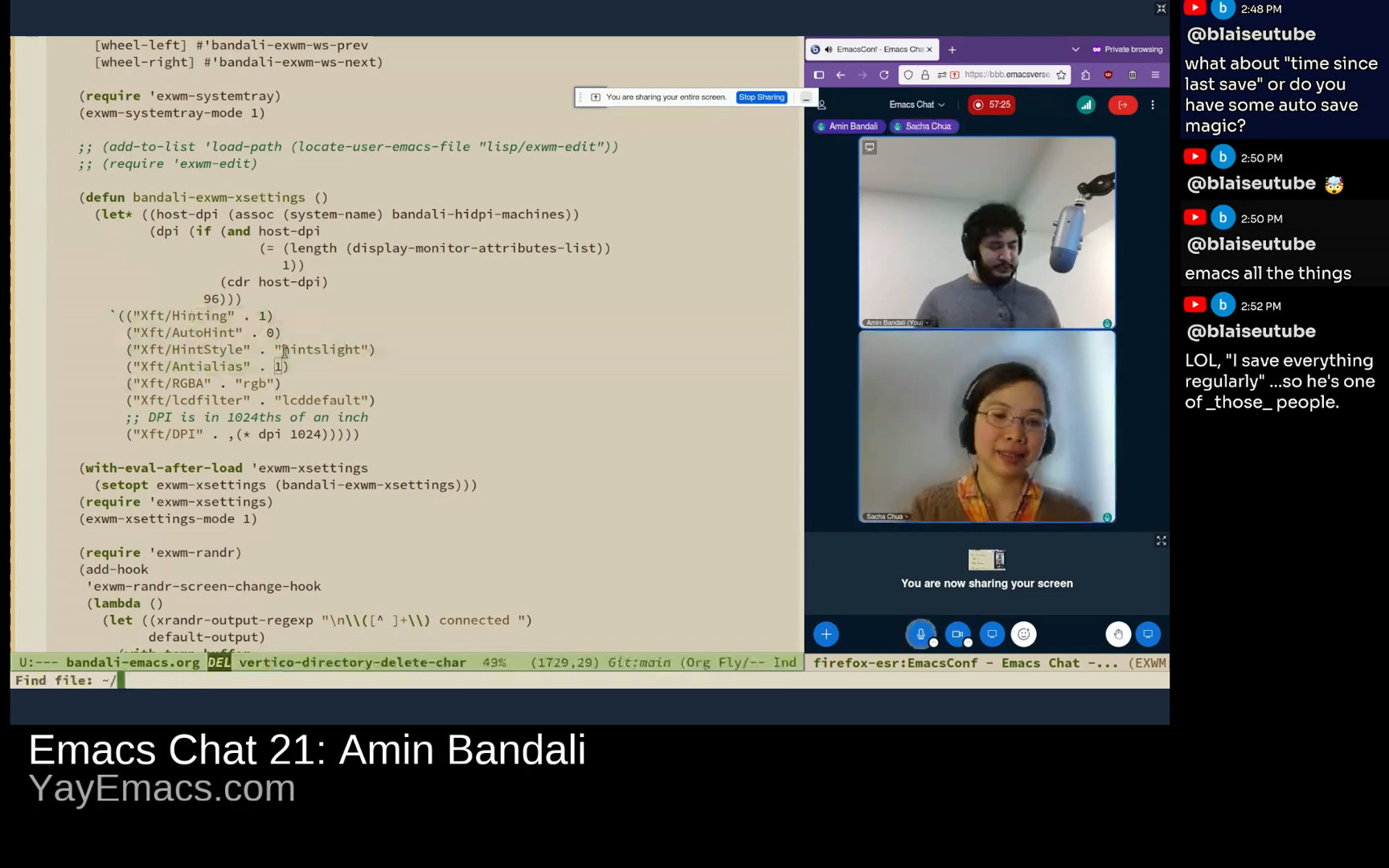

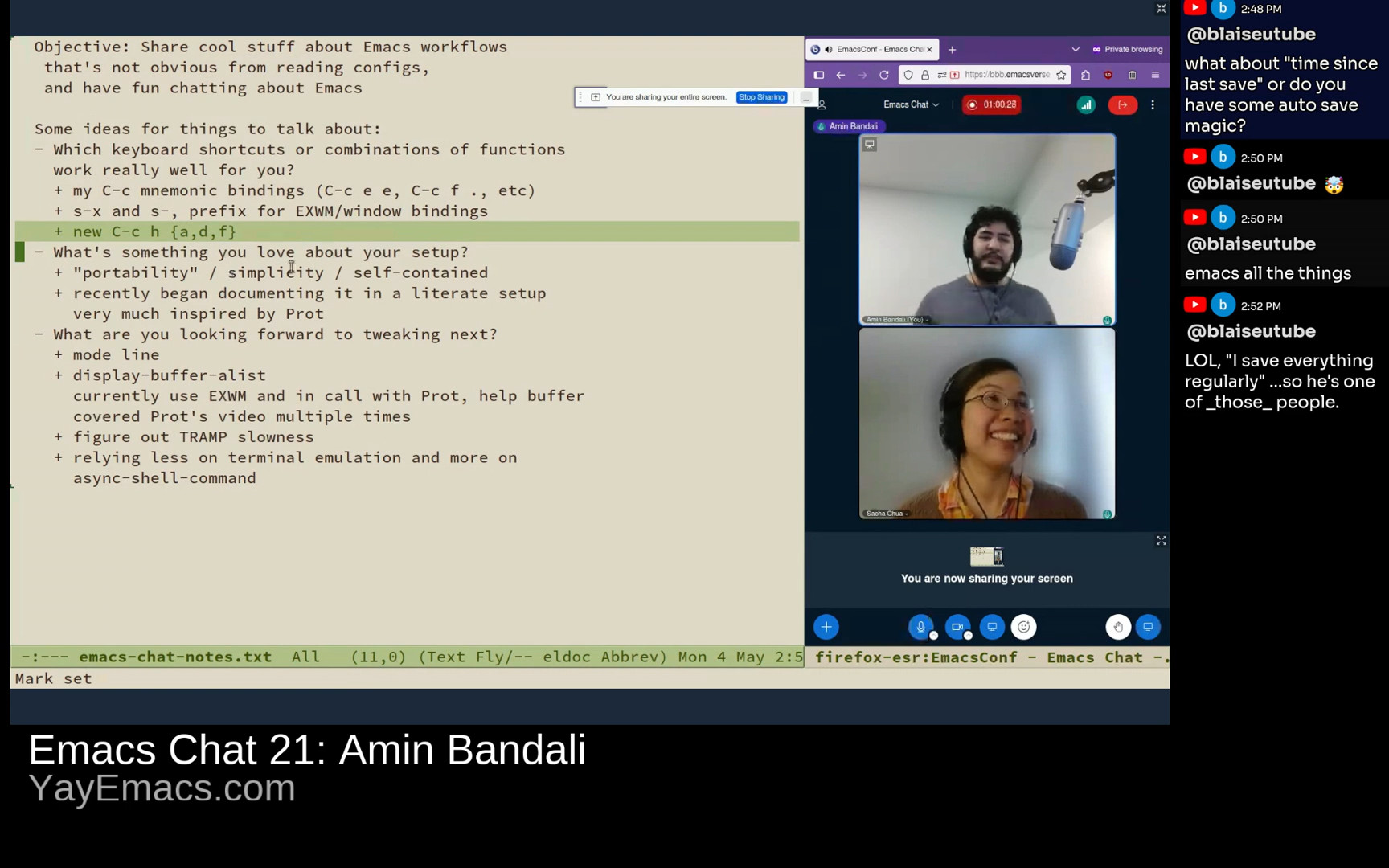

I was delighted to chat about Emacs with Shae Erisson, who has interesting experience with keyboards and with programming in Emacs.

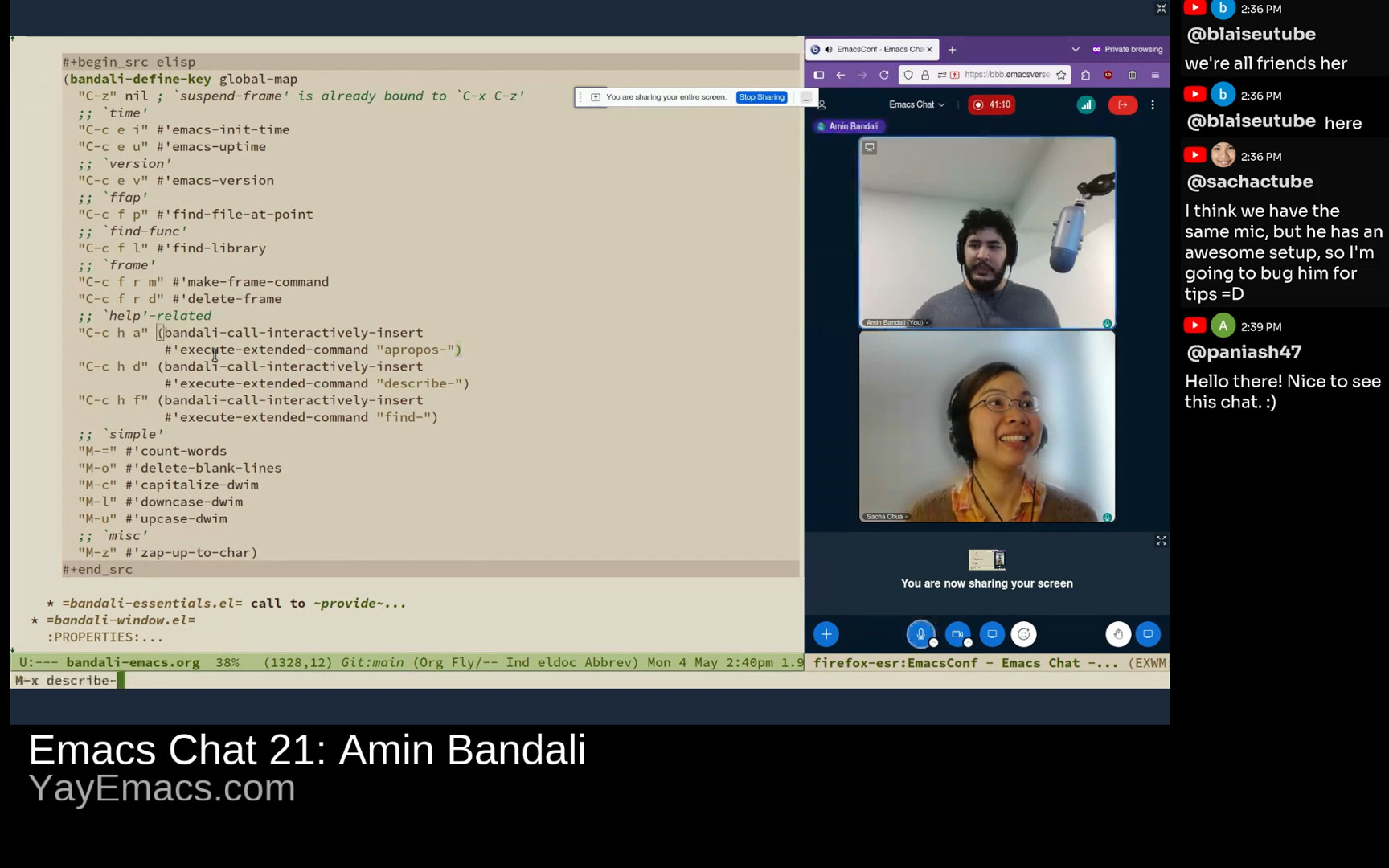

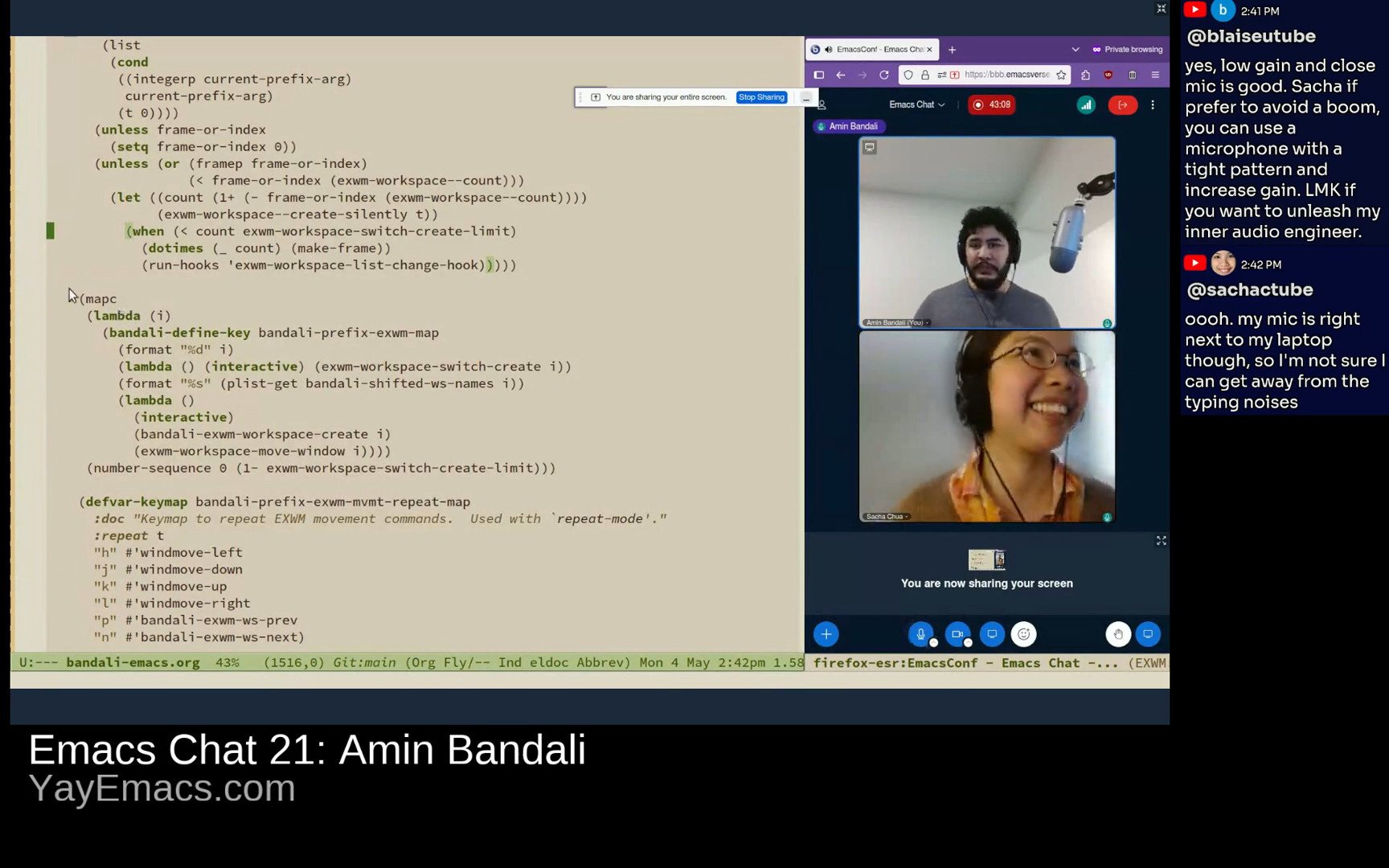

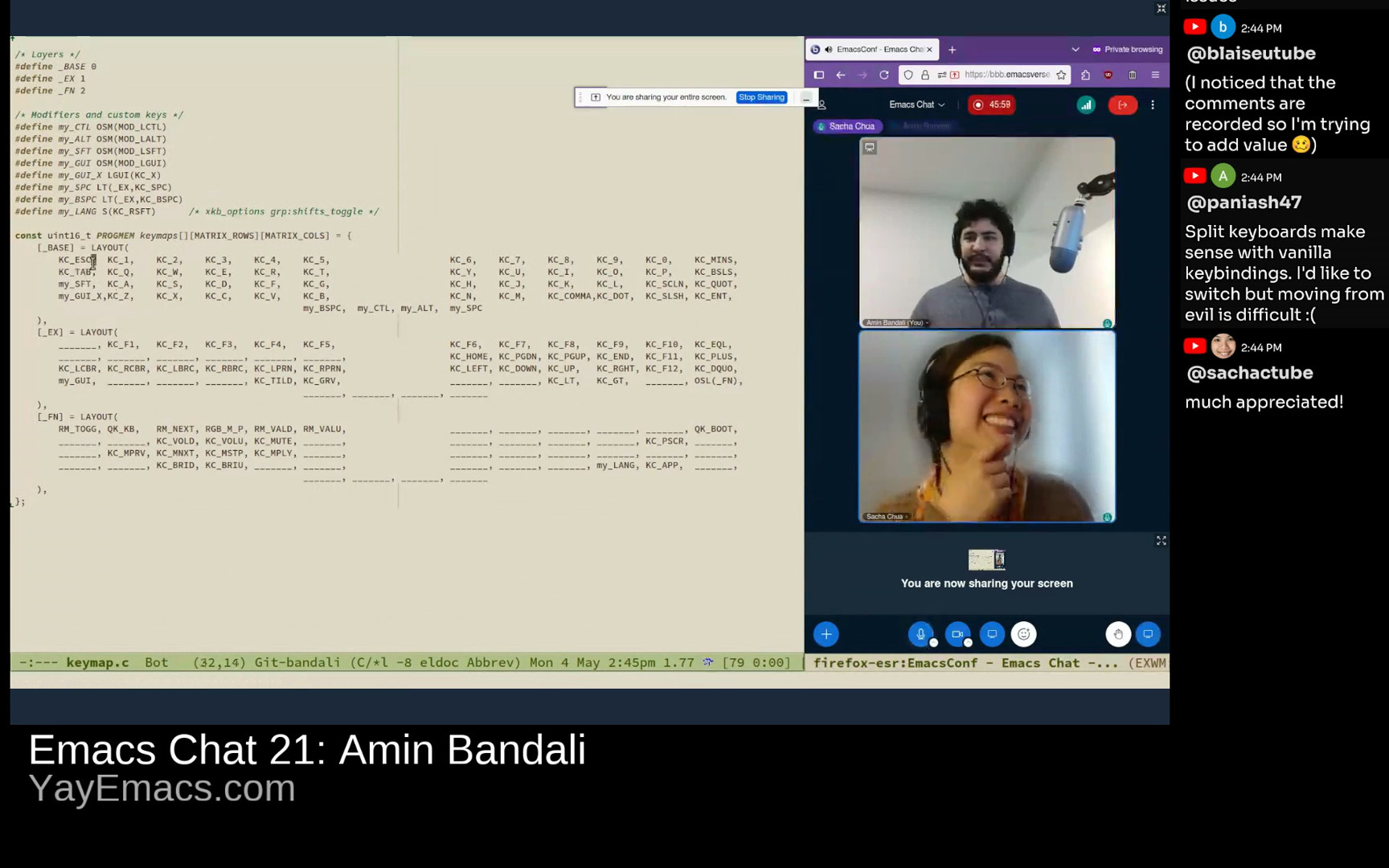

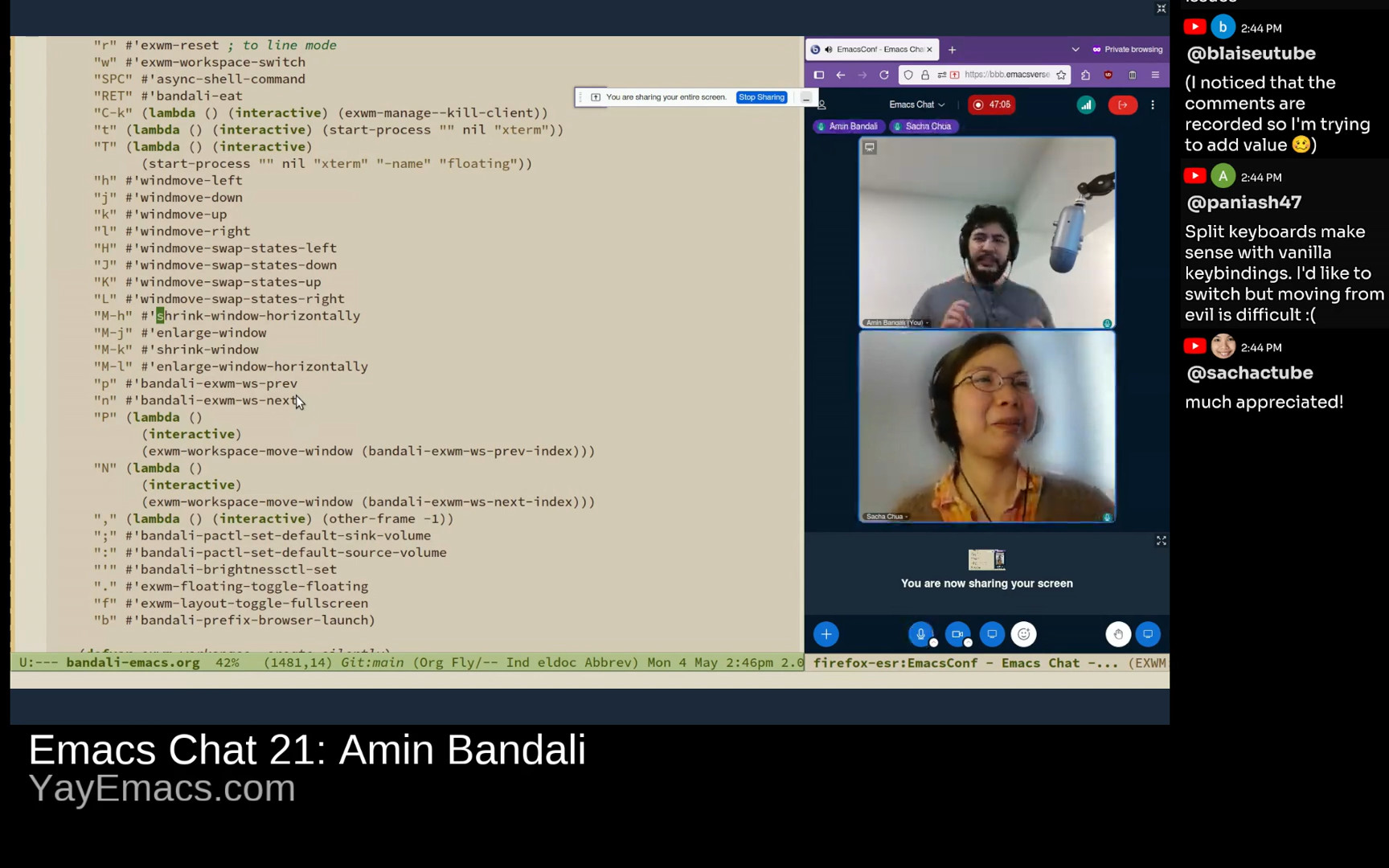

I worked on reviewing the screenshots from my conversation with John Wiegley and Karthik Chikmagalur. I wrote functions to identify the rectangles using the Tesseract OCR tool. I also used regular expressions to mask GPS coordinates and other secrets.
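As a rough illustration of the regular-expression part, here is a minimal Python sketch. It is not the code I actually wrote; the pattern, the function name `mask_coordinates`, and the `[REDACTED]` placeholder are all assumptions made for this example. It only handles decimal-degree pairs such as `43.6532, -79.3832`.

```python
import re

# Assumed pattern (hypothetical): two decimal numbers separated by a comma,
# each with at least three digits after the decimal point, e.g. "43.6532, -79.3832".
GPS_PAIR = re.compile(r"-?\d{1,3}\.\d{3,}\s*,\s*-?\d{1,3}\.\d{3,}")


def mask_coordinates(text: str, placeholder: str = "[REDACTED]") -> str:
    """Replace every decimal-degree coordinate pair in text with a placeholder."""
    return GPS_PAIR.sub(placeholder, text)


if __name__ == "__main__":
    print(mask_coordinates("Location: 43.6532, -79.3832 (home)"))
```

Requiring at least three decimal places is a deliberate trade-off in this sketch: it avoids masking things like version numbers, at the cost of missing coordinates that were written with less precision.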

I went to a new hygienist for a cleaning. I was delighted that the receptionist and the hygienist wore N95 masks and that the treatment room had a door that closed.

I discussed my mother's finances with the studio manager. I had to take care of it because my mother isn't able to manage her finances herself.

Friday 8

I've just started watching Astérix et Obélix on Netflix. I loved the comics when I was little.

After school, I took my daughter to the Stockyards to buy elastic at Fabric Fabric for her swim dress. We also looked for shoes at The Shoe Company, Children's Place, Old Navy, and Walmart, but she didn't find anything she liked.

Then we worked on her swim dress.

While we were watching Pokémon, I remarked that even Jessie has shown some nice growth. My daughter asked me if I was doing the same. I didn't understand, so I asked her what she meant. She left in a grumpy mood. I don't know what she meant, and I can't read her mind.

In Stardew, I planted the rest of the strawberries and hired the Ridgeside Odd Jobs service to water all the outdoor plants. I waited for my frying pan upgrade before finishing the last bundle because we were playing with the mods Stardew Valley Expanded (which requires a treat) and Love of Cooking (which requires the upgrade to raise the limit on the number of ingredients).

Saturday 9

My husband, my daughter, and I went downtown for Science Rendezvous, a science festival. My daughter had a lot of fun. She enjoyed painting with plants, using paints derived from turmeric, beets, spinach, and red cabbage. She was also interested in the bubbles filled with carbon dioxide from dry ice.

On the way home, my daughter and I stopped by the Chinese bakery for some buns.

Sunday 10

My daughter woke me up and gave me a Mother's Day card. She also made a six-egg omelette for us to enjoy.

My husband improved my desk. He cut another shelf and attached it to my desk as a tray. It was very handy. Now I can fit more things on my desk.

In Stardew Valley, my daughter and I had fun exploring Skull Cavern. She forgot to bring food, so I gave her several cheeses.